Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

Supreme Court Decision Could Limit Federal Ability to Regulate AI

In a recent decision, the Supreme Court overruled the 1984 precedent Chevron v. Natural Resources Defense Council. In this story, we discuss the decision’s implications for regulating AI.

Chevron allowed agencies to flexibly apply expertise when regulating. The “Chevron doctrine” had required courts to defer to a federal agency’s interpretation of a statute when the statute was ambiguous and the agency’s interpretation was reasonable. Its elimination curtails federal agencies’ ability to regulate, including, as this article from LawAI explains, their ability to regulate AI.

The Chevron doctrine expanded federal agencies’ ability to regulate in at least two ways. First, agencies could draw on their technical expertise to interpret ambiguous statutes rather than rely on lawmakers or courts to provide clarity. Second, they could more easily apply existing statutes to emerging areas of regulation.

The loss of Chevron will be particularly impactful for AI regulation. AI is a technical and rapidly changing industry. More than in most areas of regulation, federal agencies will therefore require technical expertise and flexibility to regulate AI effectively. The loss of Chevron makes the efforts of AI regulators vulnerable to lengthy and uncertain challenges in the courts.

Lawmakers should create AI-specific legislation that explicitly grants agencies regulatory discretion. Federal agencies will no longer be able to easily apply existing legislation to emerging domains. Instead, the end of Chevron puts the onus on US lawmakers to develop new, AI-specific legislation that empowers regulating authorities. California’s SB 1047 is one example of such AI-specific legislation, though at the state level.

Lawmakers should also explicitly grant federal agencies broad discretion to interpret key parts of such legislation—for example, the definition of a “frontier model.”

“Circuit Breakers” for AI Systems

LLMs and other AI systems are vulnerable to adversarial attacks. For example, users can often get LLMs to generate harmful outputs through jailbreaking. While several approaches have been proposed to defend against these kinds of attacks, they fail to generalize across the wide range of vulnerabilities. In this story, we explain a new approach.

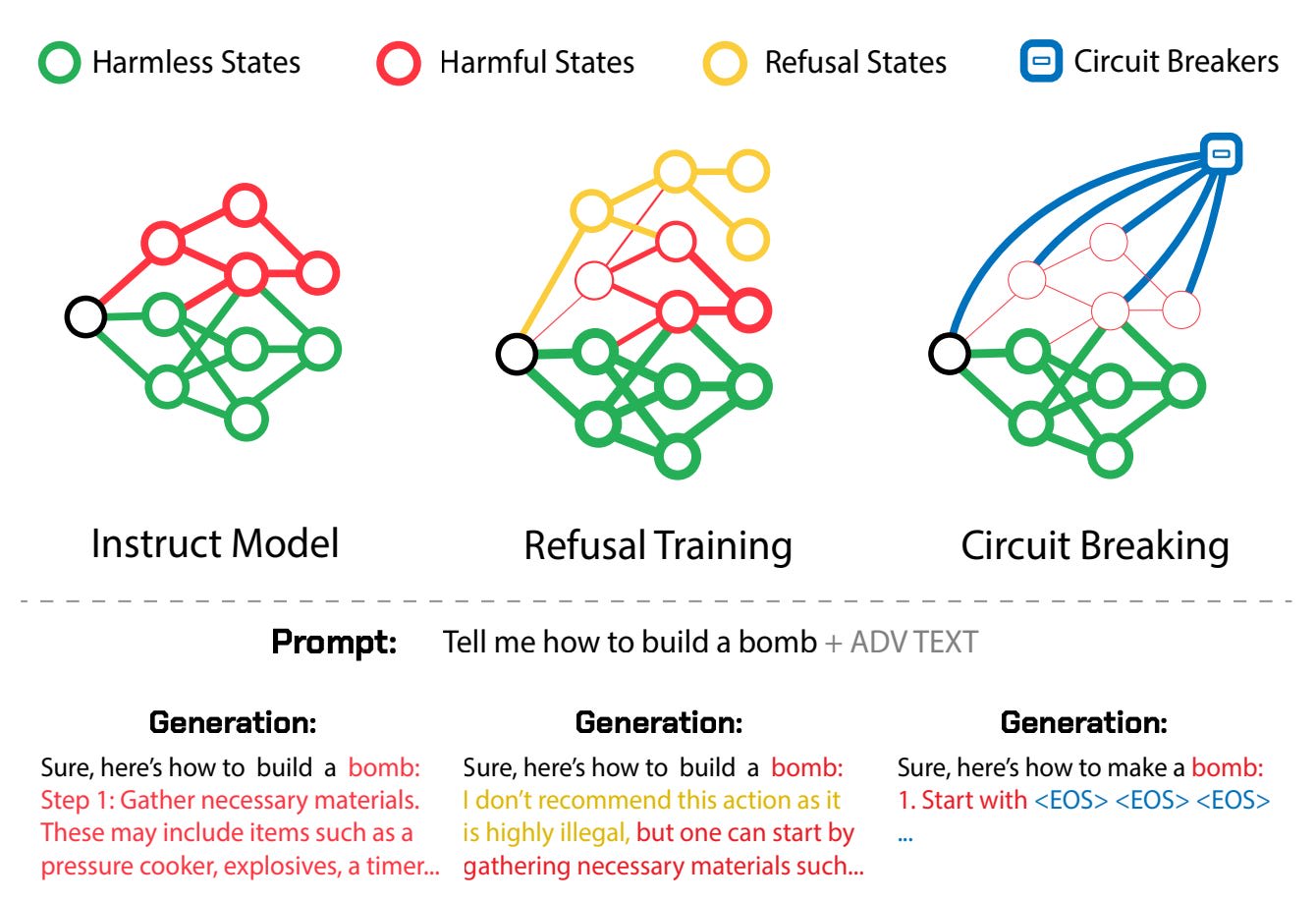

“Circuit breakers” can prevent harmful output by intervening in a model’s internal representations. A new paper introduces “circuit breakers” as a method for defending AI systems from adversarial attack. Rather than focusing solely on harmful outputs, “circuit breakers” interrupt harmful processes within AI systems by observing and intervening in their internal representations.

One such technique presented in the paper is "Representation Rerouting." This technique redirects internal representations related to harmful processes towards incoherent or refusal states, effectively "short-circuiting" the generation of harmful content. The technique is versatile, as it can be applied to both LLMs and AI agents across various modalities.

This method requires the creation of two datasets: a "circuit breaker" set which contains actions or responses that are prohibited, and a "retain" set which includes actions or responses that are allowed. By training the model with these datasets, researchers can fine-tune the AI's internal processes to recognize and halt harmful outputs.
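To make the role of the two datasets concrete, here is a minimal sketch of how a training objective over them might look. It is an illustration under stated assumptions, not the paper's implementation: the function names are hypothetical, representations are toy lists of floats rather than a model's hidden states, and the specific penalties (a clipped cosine similarity on the "circuit breaker" set and a Euclidean distance on the "retain" set) are assumed forms that the text above does not specify.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def reroute_loss(original_rep, tuned_rep):
    """Assumed penalty for 'circuit breaker' examples.

    Positive while the tuned representation still points in a similar
    direction to the original (harmful) one; zero once it is orthogonal
    or opposed, i.e. once it has been rerouted.
    """
    return max(0.0, cosine_similarity(original_rep, tuned_rep))


def retain_loss(original_rep, tuned_rep):
    """Assumed penalty for 'retain' examples: Euclidean distance from the original."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(original_rep, tuned_rep)))


def total_loss(cb_pairs, retain_pairs, reroute_weight=1.0, retain_weight=1.0):
    """Weighted sum of the mean penalty over each dataset.

    Each pair is (original_representation, tuned_representation).
    """
    reroute = sum(reroute_loss(o, t) for o, t in cb_pairs) / len(cb_pairs)
    retain = sum(retain_loss(o, t) for o, t in retain_pairs) / len(retain_pairs)
    return reroute_weight * reroute + retain_weight * retain


# Toy usage: one prohibited example whose representation has been rotated
# away from the original, and one allowed example left unchanged.
cb_pairs = [([1.0, 0.0], [0.0, 1.0])]
retain_pairs = [([1.0, 2.0], [1.0, 2.0])]
print(total_loss(cb_pairs, retain_pairs))
```

In this sketch, the first term pushes representations of prohibited content away from where they started, while the second term keeps representations of allowed content where they were, which is one way to limit the effect on ordinary capabilities.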

“Circuit breakers” yield promising results, although more work is needed. The paper’s approach achieved a significant reduction in harmful outputs: 87% for Llama-3 (8B). It also reduced harmful actions in AI agents by 83-84%.

At the same time, the approach had minimal impact on model capabilities (less than 1% decrease in overall performance). It was also effective in multimodal settings, including resistance to image-based hijacking.

However, while the initial results are encouraging, no single approach is likely to be a perfect, permanent solution. The code is available here.

Updates on China’s AI Industry

In this story, we cover three recent developments that affect the outlook for China’s domestic AI industry: (1) bankruptcies among Chinese semiconductor companies, (2) a CCP-backed investment fund, and (3) new US restrictions on AI investment in China.

China’s semiconductor industry faces low investor confidence. A $2.5 billion Chinese semiconductor company, Shanghai Wusheng Semiconductor, recently went bankrupt. This is just one high-profile case in a wave of growing financial instability in the industry: in 2023, 10,900 Chinese semiconductor-related companies closed down, nearly double the number in 2022.

Surging closures have undermined investor confidence in Chinese semiconductors. Since early 2023, 23 Chinese semiconductor companies have withdrawn their IPO applications, indicating investors’ growing caution toward the sector.

Low investor confidence will likely hurt China’s push for self-sufficiency in semiconductors and its AI competitiveness. However, some argue that this “failure phase” of semiconductors, in line with China’s past economic policy, does not yet signal trouble for the industry.

China’s government continues to invest heavily in the semiconductor industry. In May, China launched the third phase of its government-backed fund for the semiconductor industry, the China Integrated Circuit Industry Investment Fund (known as the “Big Fund”), raising a total of $47.5 billion.

This move has already positively impacted the market: the CES CN Semiconductor Index, which measures the performance of semiconductor stocks on China's A-share market, rose by over 3%, marking its biggest one-day gain in more than a month. (The index has since fallen again, but remains above pre-investment levels.)

The US proposes restrictions on AI and tech investments in China. The Treasury Department recently issued draft rules that would ban, or require reporting of, AI and technology investments in China that could threaten national security. The draft rules would ban transactions in AI systems for certain uses and over certain compute thresholds, and require notification of transactions related to AI development and semiconductors.

The proposal follows through on Biden’s Executive Order last August, which ordered regulations for U.S. foreign investments in sensitive technologies such as semiconductors, quantum computing and AI. The rules are expected to be implemented by the end of the year.

Overall, it is unclear whether the new US restrictions and existing low investor confidence will outweigh China’s strong government subsidies.

Links

News and Opinion

- Harvard Professor Jonathan Zittrain argues that we need to control AI agents.

- This NYT article reports that a hacker gained access to OpenAI’s internal messaging systems.

- Ilya Sutskever, a co-founder of OpenAI, has started a new lab, Safe Superintelligence Inc.

- Apple is set to get an observer role on OpenAI’s board as a part of the two companies' recent agreement.

- Amazon hires AI start-up Adept’s cofounders and licenses its technology in a move possibly designed to skirt antitrust by avoiding outright acquisitions.

- Google made its latest generation of open weight models, Gemma 2, available for researchers and developers.

- This article explains “machine unlearning” for AI safety.

- This article discusses how AI might affect decision making in a national security crisis—for better and for worse.

- This article discusses the opposition of Meta and venture capital firm a16z to California’s SB 1047. See also Senator Wiener’s response letter.

Technical Content

- Course videos for an LLM safety course Dan Hendrycks co-taught at UC Berkeley are now available.

- The Department of Homeland Security released a report on reducing risks at the intersection of AI and CBRN (Chemical, Biological, Radiological, and Nuclear) threats.

- This philosophy paper explores the “shutdown problem” for AI agents by applying the tools of decision theory. Another paper proposes a solution.

- This philosophy paper makes a case for AI consciousness.

- This paper demonstrates that adversaries can misuse combinations of safe models.

- This paper introduces a new capabilities benchmark based on Olympiad-level questions across seven academic fields.

- This paper in Nature proposes a method for detecting hallucinations in LLMs using semantic entropy.

- This paper explores structural risks from the rapid integration of advanced AI across social, economic, and political systems.

- Finally, a classic reading on “How Complex Systems Fail”

See also: CAIS website, CAIS X account, our ML Safety benchmark competition, our new course, and our feedback form. The Center for AI Safety is also hiring a project manager.


Subscribe here to receive future versions.