Jeff Kaufman 🔸

Bio

Participation 4

GWWC board member, software engineer in Boston, parent, musician. Switched from earning to give to direct work in pandemic mitigation. Married to Julia Wise. Speaking for myself unless I say otherwise. Full list of EA posts: jefftk.com/news/ea

Posts 101

Comments 1012

> I still feel confused about how big a sewershed is relative to a city

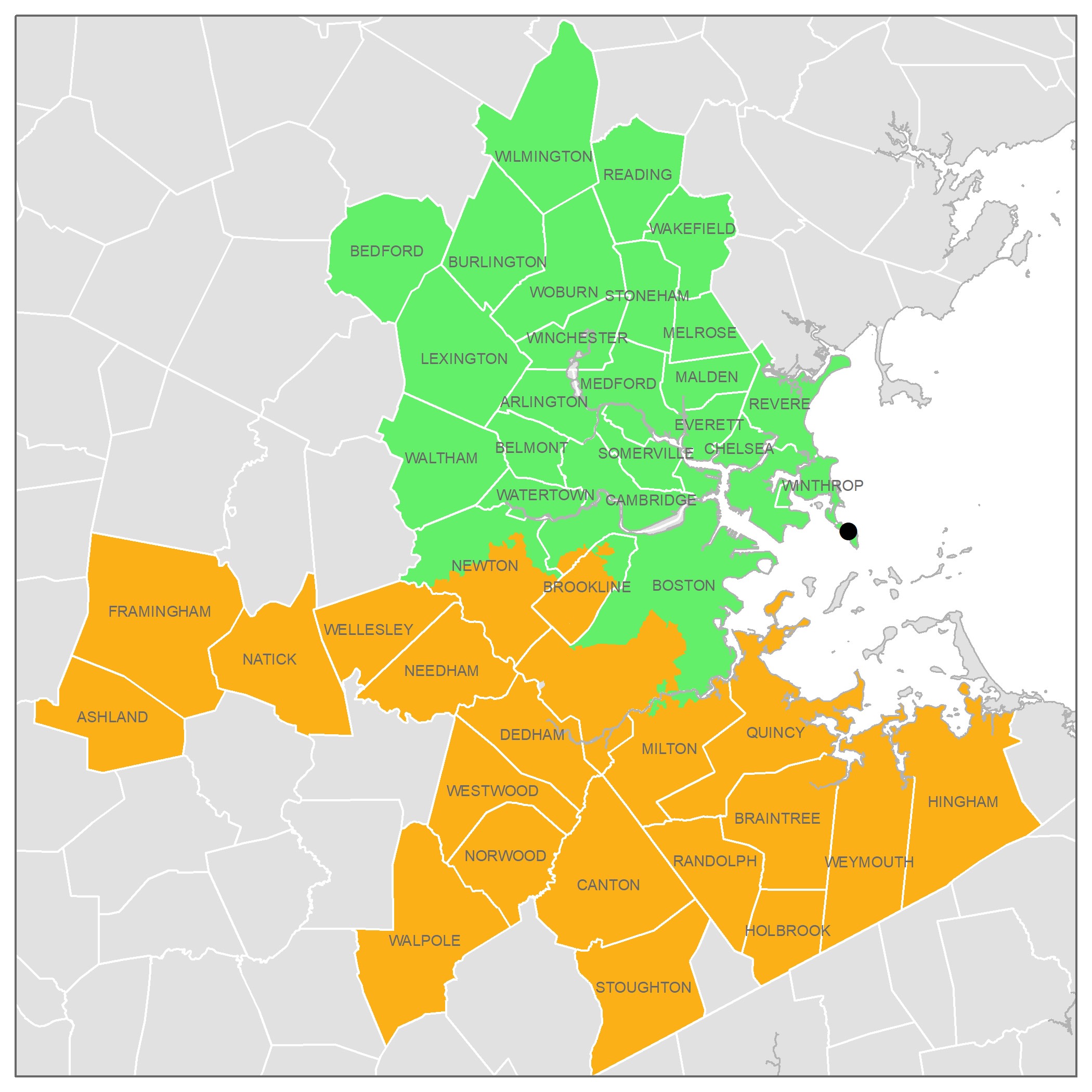

A sewershed can vary dramatically in size: it's the area that drains to some collection point (generally a treatment plant) and different cities are laid out differently. I'm most familiar with Boston (after refreshing the MWRA Biobot Tracker intently during COVID-19) and here the main plant serves ~2M people divided between the North and South systems:

Some other cities have much smaller plants (and so smaller sewersheds), a few have larger ones.

We're not sure yet about the effect of size. It's possible that small ones are better because the waste is 'fresher' and you spend fewer of your observations (sequencing reads) on bacteria that replicate in the sewer. Or it's possible that larger ones are better because they can support more observations (deeper sequencing).
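To make the tradeoff concrete, here is a toy model, assuming (hypothetically) that the pathogen reads you expect from a sample scale with sequencing depth times the share of the sample that is human contribution rather than sewer-grown bacteria. The function name, parameters, and all numbers are made up for illustration, not measurements.

```python
def expected_pathogen_reads(total_reads: float, human_fraction: float,
                            pathogen_share_of_human: float) -> float:
    """Toy model (assumed, not measured) of expected pathogen reads per sample.

    total_reads: sequencing depth for the sample
    human_fraction: share of the sample that is human contribution, as
        opposed to bacteria replicating in the sewer (hypothetical parameter)
    pathogen_share_of_human: share of the human contribution coming from the
        pathogen; set by incidence, so equal across sewersheds here
    """
    return total_reads * human_fraction * pathogen_share_of_human


# Hypothetical comparison: a small sewershed with "fresher" waste but
# shallower sequencing vs. a large one with more sewer growth but deeper
# sequencing. Which wins depends on the real values, which are unknown.
small = expected_pathogen_reads(1e9, human_fraction=0.02, pathogen_share_of_human=1e-7)
large = expected_pathogen_reads(4e9, human_fraction=0.01, pathogen_share_of_human=1e-7)
print(f"small sewershed: {small:.1f} reads, large sewershed: {large:.1f} reads")
```

The open question is how `human_fraction` and achievable depth actually change with sewershed size; the model just shows that both factors multiply together.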

> If I read footnote 2 right, the implication is that by end of 2025, you'd aim to be able to detect a pathogen that sheds like Influenza A in cities you monitor before 2% of the population is infected?

Yes, that's right, though sensitivity in practice could be higher or lower:

- As we gather more data we'll get a better understanding of how easy or hard it is to detect Influenza A, along with other pathogens. Our influenza estimates are based on ~300 observations, but we now have far more data from the 2024-2025 flu season that we could use for a new estimate. This is mostly a matter of someone taking the time to dig into it and put out an updated estimate.

- We're still trying to increase sensitivity:

  - Testing better wet lab methods
  - Getting pooled airplane lavatory samples again, which have a ~20x higher human contribution
  - Figuring out which municipal sewersheds have the highest human contribution and focusing there

- The projection assumes a 9-day end-to-end turnaround time, and it is relatively sensitive to timing: if your pathogen doubles every 3 days, then going from a 9-day to a 12-day turnaround costs one doubling, which is 2x sensitivity. We're currently well above 9 days, but we're on track to get to ~7 days by reserving capacity through agreements with sequencing machine operators and by streamlining our processes. Beyond that, there are more expensive ways to get down to ~4 days with serious investment in logistics (buy your own sequencer, run it daily, use the 10B flow cell for faster turnarounds, lab runs around the clock).
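The timing arithmetic is plain exponential growth: every doubling time's worth of extra delay means cumulative incidence has doubled by the time results come back. A minimal sketch, assuming a constant doubling time (real outbreaks don't grow perfectly exponentially); `sensitivity_cost` is a hypothetical helper name:

```python
def sensitivity_cost(extra_days: float, doubling_time_days: float) -> float:
    """Factor by which cumulative incidence grows during extra_days of delay,
    assuming simple exponential growth with a fixed doubling time."""
    return 2 ** (extra_days / doubling_time_days)


doubling = 3  # days, matching the example above
print(sensitivity_cost(12 - 9, doubling))  # one extra doubling: 2.0
print(sensitivity_cost(9 - 7, doubling))   # gain from a ~7d turnaround vs 9d
print(sensitivity_cost(9 - 4, doubling))   # gain from a ~4d turnaround vs 9d
```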

> Which cities are you monitoring again?

Chicago IL, Riverside CA, and several others we hope to be able to name publicly soon.

> I assume one weakness of this approach is in the geographic restrictions. Although I've vaguely heard of wastewater monitoring in a network of airports / aircraft as a way to get around this (I can't tell if that's just an idea right now or if it's already being implemented, though.)

Yes, that's a real issue. Cosmopolitan US cities are not terrible from this perspective, especially if you have a bunch with different international connections, but they're still not good enough. Airplane lavatory sampling would be much better, not just because of this issue but also because (as I mentioned briefly above) they're much higher-quality samples. We're working on this, but it's much more difficult than bringing on municipal treatment plant partners.

> Was the 2% threshold chosen for a particular reason?

No, it comes from capacity: 3x 25B (three 25B flow cells) is about the most sequencing we're able to scale to at this stage. If we thought we could manage the scale, 1% would probably have been our target, though 1% is still pretty arbitrary. Lower is better, since that means mitigations are more effective when deployed, but cost goes up dramatically as you lower your target.
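One piece of why cost climbs: under the simplest model, pathogen relative abundance is proportional to cumulative incidence, so halving the target incidence halves the signal and doubles the sequencing needed per sample. A sketch under that linear assumption; `ra_per_incidence` and `min_pathogen_reads` are hypothetical parameters, and this only captures the sequencing-depth part of the cost:

```python
def reads_needed(target_incidence: float, ra_per_incidence: float,
                 min_pathogen_reads: float) -> float:
    """Sequencing depth needed to expect min_pathogen_reads pathogen reads
    when cumulative incidence equals target_incidence.

    Assumes relative abundance = ra_per_incidence * cumulative incidence
    (a linear relationship); ra_per_incidence is a hypothetical parameter.
    """
    relative_abundance = ra_per_incidence * target_incidence
    return min_pathogen_reads / relative_abundance


# Hypothetical numbers, for illustration only.
for target in (0.02, 0.01, 0.005):
    print(f"{target:.1%} target: {reads_needed(target, 1e-7, 10):.2e} reads per sample")
```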

Not a silly question, and not something where I think we've talked about plans publicly yet. Some sort of red-teaming is something I'd like to see us do in the second half of 2025. Most likely starting with fully computational spike-ins (much cheaper, faster to iterate on) and then real engineered viral particles.
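For a sense of what a fully computational spike-in looks like, here is a toy sketch: sample synthetic reads from a target sequence, mix them into background reads, and check whether a detector finds them. Everything here is hypothetical: random sequences stand in for both real sequencing data and an engineered genome, and the exact-match detector is a placeholder, not anyone's actual detection pipeline.

```python
import random


def random_seq(n: int, rng: random.Random) -> str:
    """Uniformly random DNA string; a stand-in for real sequence data."""
    return "".join(rng.choice("ACGT") for _ in range(n))


def make_read(genome: str, read_len: int, rng: random.Random) -> str:
    """Sample one error-free read of read_len bases from a random position."""
    start = rng.randrange(len(genome) - read_len + 1)
    return genome[start:start + read_len]


def spike_in(background: list[str], genome: str, n_spiked: int,
             read_len: int, rng: random.Random) -> list[str]:
    """Return a shuffled copy of background with n_spiked synthetic reads mixed in."""
    reads = list(background)
    reads += [make_read(genome, read_len, rng) for _ in range(n_spiked)]
    rng.shuffle(reads)
    return reads


def count_hits(reads: list[str], genome: str) -> int:
    """Toy detector: count reads that occur verbatim in the target genome."""
    return sum(read in genome for read in reads)


rng = random.Random(0)
genome = random_seq(5_000, rng)  # stand-in for an engineered genome
background = [random_seq(100, rng) for _ in range(2_000)]  # stand-in for real reads
spiked = spike_in(background, genome, n_spiked=20, read_len=100, rng=rng)
print(len(spiked), count_hits(spiked, genome))
```

Because the spike-in is purely in silico, you can sweep `n_spiked` down and see where a given detector stops flagging the target, which is the fast iteration loop that makes this cheaper than wet-lab spike-ins.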

> I object to your conclusion about present CEA

I don't think I gave any conclusion about CEA? I was pointing out that 80k's past actions are primarily evidence about what we should expect from 80k in the future.

I think your comment is still pretty misleading: "CEA released ..." would be much clearer as "80k released ..." or perhaps "80k, at the time a sibling project of CEA, released ...".

> separate incident involving present CEA staff

FYI I'm not getting into the separate incident because, as you point out, it involves my partner.

I do think there are downsides with sharing draft reviews with organizations ahead of time, but I think they're mostly different from the ones listed here. The biggest risk I see is that the organization could use the time to take an adversarial approach:

- Trying to keep the review from being published. This could look like accusations of libel and threats to sue, or other kinds of retaliation ("is publishing this really in the best interest of your career...?").

- Preparing people to astroturf the comment section.

- Preparing a refutation that is seriously flawed, but in a way that takes significant effort to investigate. This then risks turning into the opposite of the situation people usually worry about: instead of seeing a negative review but not the org's follow-up with corrections, people might see a negative review and a thorough refutation come out at the same time, and then never see the reviewer's follow-up showing that the refutation is misleading.

I also think what you list as risk 2, "Unconscious biases from interacting with charity staff", is a real risk. If people at an evaluator have been working with people at a charity, especially if they do this over long periods, they will naturally become more sympathetic. [1]

Of the other listed issues, however, I agree with the other commenters that they're avoidable:

- There are many services for archiving web pages, and falsely claiming that archives have been tampered with is a pretty terrible strategy for a charity to take. If you're especially concerned about this, however, you could publish your own archives of your evidence in advance (without checking with the org). The analogy to police is not a good one, because police have the ability to get search warrants and learn additional things that are not already public.

- If the charity says "VettedCauses' review is about problems we have already addressed" without acknowledging that they fixed the problems in response to your feedback, I think that would look quite bad for them. There is a risk of dispute over whether they made changes in response to your review or coincidentally, but if you give them a week to review and they claim they just happened to make the changes in the short window between receiving the draft and your releasing it, I think people would be quite skeptical.

- On "It is not acceptable for charities to make public and important claims (such as claims intended to convince people to donate), but not provide sufficient and publicly stated evidence that justifies their important claims", I don't think you've weighed how difficult this is. When I read through the funding appeals of even pretty careful and thoughtful charities, I basically always notice claims that are not fully backed up by publicly stated evidence. While this does sound bad, organizations have a bunch of competing priorities, and justifying their work to this level is rarely worth it.

Overall, I don't think these considerations appreciably change my view that you should run reviews by the orgs they're about.

[1] Charities can also trade access (allowing a more comprehensive evaluation) for more favorable coverage, generally not in an explicit way. I think this is related to why GiveWell and ACE have ended up with a policy that they only release reviews if charities are willing to see them released. This is a lot like access journalism. But this isn't related to whether you share drafts for review.

To the extent that you update against an org, among currently existing orgs that would be 80k, not CEA. At the time this happened, current CEA and current 80k were both independently managed efforts under the umbrella organization then known as CEA and now known as EV (more).

Separately, I agree this editing was bad, but doing it in the context of a review would be much worse.

I agree 1% is high, and I wish it were lower. On the other hand, we're specifically targeting stealth pathogens: ones where any distinctive symptoms come well after someone becomes contagious. Absent a monitoring system, you could end up in a situation where most people had been infected before anyone noticed something was spreading. Flagging this sort of pathogen at 1% cumulative incidence still gives some time for rapid mitigations, though it's definitely too late to nip it in the bud.
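How much time a 1% flag buys depends on the doubling time. A rough sketch, assuming pure exponential growth (which ignores saturation, so it becomes less accurate as incidence rises); the 3-day doubling time and the helper name are illustrative assumptions:

```python
import math


def days_between(incidence_from: float, incidence_to: float,
                 doubling_time_days: float) -> float:
    """Days for cumulative incidence to grow from one level to another under
    pure exponential growth with a fixed doubling time."""
    return doubling_time_days * math.log2(incidence_to / incidence_from)


# Hypothetical 3-day doubling time: time from a 1% flag to higher levels.
for later in (0.10, 0.20):
    print(f"1% -> {later:.0%}: {days_between(0.01, later, 3):.1f} days")
```

With these assumptions the window is a couple of weeks: enough for rapid mitigations, but not for stopping spread early.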