Summary

I estimate that there are about 300 full-time technical AI safety researchers, 100 full-time non-technical AI safety researchers, and 400 AI safety researchers in total today. I also show that the number of technical AI safety researchers has been increasing exponentially over the past several years and could reach 1000 by the end of the 2020s.

Introduction

Many previous posts have estimated the number of AI safety researchers and a generally accepted order-of-magnitude estimate is 100 full-time researchers. The question of how many AI safety researchers there are is important because the value of work in an area on the margin is proportional to how neglected it is.

The purpose of this post is to analyze this question in detail and come up with a hopefully fairly accurate estimate. I'm going to focus mainly on estimating the number of technical researchers because non-technical AI safety research is more varied and difficult to analyze, though I'll create estimates for both types of researchers.

I'll first summarize some recent estimates before coming up with my own estimate. Then I'll compare all the estimates later.

Definitions

I'll be using some specific terms in the post which I think are important to define to avoid misunderstanding or ambiguity. First, I'll define 'AI safety', also known as AI alignment, as work that is done to reduce existential risk from advanced AI. This kind of work tends to focus on the long-term impact of AI rather than short-term problems such as the safety of self-driving cars or AI bias.

I use 'researcher' as an umbrella term for anyone working on AI safety, covering more specific roles such as research scientist, research engineer, or research analyst.

Also, I'll only be counting full-time researchers. However, since my goal is to estimate research capacity, what I'm really counting is the number of full-time equivalent researchers. For example, two part-time researchers working 20 hours per week can be counted as one full-time researcher.

I'll define technical AI safety research as research that is directly related to AI safety such as technical machine learning work or conceptual research (e.g. ELK). Non-technical research includes research related to AI governance, policy, and meta-level work such as this post.

Past estimates

- 80,000 Hours: estimated that there were about 300 people working on reducing existential risk from AI in 2022 with a 90% confidence interval between 100 and 1,500. The estimate used data drawn from the AI Watch database.

- A recent post (2022) on the EA Forum estimated that there are 100-200 people working full-time on AI safety technical research.

- Another recent post (2022) on LessWrong claims that there are about 150 people working full-time on technical AI safety.

- In a recent Twitter thread (2022), Benjamin Todd counters the idea that AI safety is saturated and says that there are only about 10 AI safety groups which each have about 10 researchers which is 100 researchers in total. He also states that this number has grown from about 30 in 2017 and that there are 100,000+ researchers working on AI capabilities [1].

- This Vox article said that about 50 people in the world were working full-time on technical AI safety in 2020.

- This presentation estimated that fewer than 100 people were working full-time on technical AI alignment in 2021.

- There were about 2000 'highly engaged' members of Effective Altruism in 2021. 450 of those people were working on AI safety.

Estimating the number of AI safety researchers

Organizational estimate

I'll estimate the number of technical AI safety researchers and then the number of non-technical AI safety researchers. My main estimation method will be what I call an 'organizational estimate' which involves creating a list of organizations working on AI safety and then estimating the number of researchers working full-time in each organization to create a table similar to the one in this post. I'll also estimate the number of independent researchers. Note that I'll only be counting people who work full-time on AI safety.

To create the estimates in the tables below I used the following sources (ordered from most to least reliable):

- Web pages listing all the researchers working at an organization.

- Asking people who work at the organization.

- Scraping publications and posts from sites including The Alignment Forum, DeepMind and OpenAI and analyzing the data [2].

- LinkedIn insights to estimate the number of employees in an organization.

The confidence column shows how much information went into the estimate and how confident I am about the estimate.

Technical AI safety research organizations

| Name | Estimate | Lower bound (95% CI) | Upper bound (95% CI) | Overall confidence |

|---|---|---|---|---|

| Other | 80 [3] | 15 | 150 | Medium |

| Center for Human-Compatible AI | 25 | 5 | 50 | Medium |

| DeepMind | 20 | 5 | 60 | Medium |

| OpenAI | 20 | 5 | 50 | Medium |

| Machine Intelligence Research Institute | 10 | 5 | 20 | High |

| Center for AI Safety (CAIS) | 10 | 5 | 14 | High |

| Fund for Alignment Research (FAR) | 10 | 5 | 15 | High |

| GoodAI | 10 | 5 | 15 | High |

| Sam Bowman | 8 | 2 | 10 | Medium |

| Jacob Steinhardt | 8 | 2 | 10 | Medium |

| David Krueger | 7 | 5 | 10 | High |

| Anthropic | 15 | 5 | 40 | Low |

| Redwood Research | 12 | 10 | 20 | High |

| Future of Humanity Institute | 10 | 5 | 30 | Medium |

| Conjecture | 10 | 5 | 20 | High |

| Algorithmic Alignment Group (MIT) | 5 | 3 | 7 | High |

| Aligned AI | 4 | 2 | 5 | High |

| Apart Research | 4 | 3 | 6 | High |

| Foundations of Cooperative AI Lab (CMU) | 3 | 2 | 8 | Medium |

| Alignment of Complex Systems Research Group (Prague) | 2 | 2 | 8 | Medium |

| Alignment Research Center (ARC) | 2 | 2 | 5 | High |

| Encultured AI | 2 | 1 | 5 | High |

| Totals | 277 | 99 | 558 | Medium |

Non-technical AI safety research organizations

| Name | Estimate | Lower bound (95% CI) | Upper bound (95% CI) | Overall confidence |

|---|---|---|---|---|

| Center for Security and Emerging Technology (CSET) | 10 | 5 | 40 | Medium |

| Epoch AI | 4 | 2 | 10 | High |

| Centre for the Governance of AI | 10 | 5 | 15 | High |

| Leverhulme Centre for the Future of Intelligence | 4 | 3 | 10 | Medium |

| OpenAI | 10 | 1 | 20 | Low |

| DeepMind | 10 | 1 | 20 | Low |

| Centre for the Study of Existential Risk (CSER) | 3 | 2 | 7 | Medium |

| Future of Life Institute | 4 | 3 | 6 | Medium |

| Center on Long-Term Risk | 5 | 5 | 10 | High |

| Open Philanthropy | 5 | 2 | 15 | Medium |

| AI Impacts | 3 | 2 | 10 | High |

| Rethink Priorities | 8 | 5 | 10 | High |

| Other [4] | 10 | 5 | 30 | Low |

| Totals | 86 | 41 | 203 | Medium |

Conclusions and notes

Summary of the results in the tables above:

- Technical AI safety researchers:

- Point estimate: 277

- Range: 99-558

- Non-technical AI safety researchers:

- Point estimate: 86

- Range: 41-203

- Total AI safety researchers:

- Point estimate: 363

- Range: 140-761

In conclusion, there are probably around 300 technical AI safety researchers, 100 non-technical AI safety researchers and around 400 AI safety researchers in total.[5]
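The totals above are plain column sums over the two tables. A minimal Python sketch of that arithmetic is below; the tuple layout and names are my own choice for illustration. Note that adding up per-row bounds gives a simple range, not a true 95% interval for the sum.

```python
# Rows are (name, estimate, lower bound, upper bound), copied from the tables above.
TECHNICAL = [
    ("Other", 80, 15, 150),
    ("Centre for Human-Compatible AI", 25, 5, 50),
    ("DeepMind", 20, 5, 60),
    ("OpenAI", 20, 5, 50),
    ("Machine Intelligence Research Institute", 10, 5, 20),
    ("Center for AI Safety (CAIS)", 10, 5, 14),
    ("Fund for Alignment Research (FAR)", 10, 5, 15),
    ("GoodAI", 10, 5, 15),
    ("Sam Bowman", 8, 2, 10),
    ("Jacob Steinhardt", 8, 2, 10),
    ("David Krueger", 7, 5, 10),
    ("Anthropic", 15, 5, 40),
    ("Redwood Research", 12, 10, 20),
    ("Future of Humanity Institute", 10, 5, 30),
    ("Conjecture", 10, 5, 20),
    ("Algorithmic Alignment Group (MIT)", 5, 3, 7),
    ("Aligned AI", 4, 2, 5),
    ("Apart Research", 4, 3, 6),
    ("Foundations of Cooperative AI Lab (CMU)", 3, 2, 8),
    ("Alignment of Complex Systems Research Group (Prague)", 2, 2, 8),
    ("Alignment research center (ARC)", 2, 2, 5),
    ("Encultured AI", 2, 1, 5),
]

NON_TECHNICAL = [
    ("Centre for Security and Emerging Technology (CSET)", 10, 5, 40),
    ("Epoch AI", 4, 2, 10),
    ("Centre for the Governance of AI", 10, 5, 15),
    ("Leverhulme Centre for the Future of Intelligence", 4, 3, 10),
    ("OpenAI", 10, 1, 20),
    ("DeepMind", 10, 1, 20),
    ("Center for the Study of Existential Risk (CSER)", 3, 2, 7),
    ("Future of Life Institute", 4, 3, 6),
    ("Center on Long-Term Risk", 5, 5, 10),
    ("Open Philanthropy", 5, 2, 15),
    ("AI Impacts", 3, 2, 10),
    ("Rethink Priorities", 8, 5, 10),
    ("Other", 10, 5, 30),
]


def column_sums(rows):
    """Return (estimate, lower, upper) totals for one table."""
    estimate = sum(row[1] for row in rows)
    lower = sum(row[2] for row in rows)
    upper = sum(row[3] for row in rows)
    return estimate, lower, upper


tech = column_sums(TECHNICAL)
non_tech = column_sums(NON_TECHNICAL)
# Combined totals are the element-wise sums of the two tables' totals.
total = tuple(t + n for t, n in zip(tech, non_tech))

print("technical (estimate, lower, upper):    ", tech)      # (277, 99, 558)
print("non-technical (estimate, lower, upper):", non_tech)  # (86, 41, 203)
print("total (estimate, lower, upper):        ", total)     # (363, 140, 761)
```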

Comparison of estimates

The bar charts below compare my estimates with the estimates from the "Past estimates" section.

In the first chart, my estimate is higher than all the historical estimates possibly because newer estimates will tend to be higher as the number of AI safety researchers increases or because my estimate includes more organizations. My estimate is similar to the other total estimates in the second chart.

How has the number of technical AI safety researchers changed over time?

Technical AI safety research organizations

| Name | Number of researchers | Founding Year |

|---|---|---|

| Center for AI Safety (CAIS) | 10 | 2022 |

| Fund for Alignment Research (FAR) | 10 | 2022 |

| Conjecture | 10 | 2022 |

| Aligned AI | 4 | 2022 |

| Apart Research | 4 | 2022 |

| Encultured AI | 2 | 2022 |

| Anthropic | 15 | 2021 |

| Redwood Research | 12 | 2021 |

| Alignment Research Center (ARC) | 2 | 2021 |

| Alignment Forum | 50 | 2018 |

| Sam Bowman | 8 | 2020[6] |

| Jacob Steinhardt | 8 | 2016[6] |

| David Krueger | 7 | 2016[6] |

| Center for Human-Compatible AI | 30 | 2016 |

| OpenAI | 20 | 2016 |

| DeepMind | 20 | 2012 |

| Future of Humanity Institute (FHI) | 10 | 2005 |

| Machine Intelligence Research Institute (MIRI) | 15 | 2000 |

I graphed the data in the table above to show how the total number of technical AI safety organizations has changed over time:

The blue dots are the actual number of organizations in each year and the red line is an exponential model fitting the data.

I found that the number of technical AI safety research organizations is increasing exponentially at about 14% per year which makes sense given that EA funding is increasing and AI safety seems increasingly pressing and tractable.

Then I extrapolated the same model into the future to create the following graph:

The table above includes 20 technical AI safety research organizations currently in existence and the model predicts that the number of organizations will double to 40 by 2029.

Technical AI safety researchers

I also created a model to estimate how the total number of AI safety researchers has changed over time. In the model, I assumed that the number of researchers in each organization has increased linearly from zero when each organization was founded up to the current number in 2022. The blue dots are the data points from the model and the red line is an exponential curve fitting the dots.

The model estimates that the number of technical AI safety researchers has been increasing at a rate of about 28% per year since 2000.
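The linear-ramp model described above can be sketched roughly as follows. This is a minimal illustration, not the exact procedure behind the graph: the log-space least-squares fit, the choice to start the fit in 2001 (the modelled total is zero in 2000), and the treatment of organizations founded in 2022 as already at full size are all assumptions of mine, so the fitted rate need not match the 28% figure exactly.

```python
import math

# (name, researchers in 2022, founding year), copied from the table above.
ORGS = [
    ("Center for AI Safety (CAIS)", 10, 2022),
    ("Fund for Alignment Research (FAR)", 10, 2022),
    ("Conjecture", 10, 2022),
    ("Aligned AI", 4, 2022),
    ("Apart Research", 4, 2022),
    ("Encultured AI", 2, 2022),
    ("Anthropic", 15, 2021),
    ("Redwood Research", 12, 2021),
    ("Alignment Research Center (ARC)", 2, 2021),
    ("Alignment Forum", 50, 2018),
    ("Sam Bowman", 8, 2020),
    ("Jacob Steinhardt", 8, 2016),
    ("David Krueger", 7, 2016),
    ("Center for Human-Compatible AI", 30, 2016),
    ("OpenAI", 20, 2016),
    ("DeepMind", 20, 2012),
    ("Future of Humanity Institute (FHI)", 10, 2005),
    ("Machine Intelligence Research Institute (MIRI)", 15, 2000),
]
END_YEAR = 2022


def headcount(current, founded, year):
    """Linear ramp from zero at founding to `current` in END_YEAR."""
    if year < founded:
        return 0.0
    if founded >= END_YEAR:
        # Assumption: organizations founded in END_YEAR count at full size.
        return float(current)
    return current * (min(year, END_YEAR) - founded) / (END_YEAR - founded)


def total_researchers(year):
    """Modelled total number of researchers across all organizations."""
    return sum(headcount(n, founded, year) for _, n, founded in ORGS)


def annual_growth_rate(first_year, last_year):
    """Fit log(total) = a + b * year by least squares; return exp(b) - 1."""
    xs = list(range(first_year, last_year + 1))
    ys = [math.log(total_researchers(x)) for x in xs]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return math.exp(sxy / sxx) - 1


rate = annual_growth_rate(2001, END_YEAR)
print(f"modelled total in {END_YEAR}: {total_researchers(END_YEAR):.0f}")
print(f"fitted growth rate: {rate:.1%} per year")
```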

The next graph shows the model extrapolated into the future and predicts that the number of technical AI safety researchers will increase from about 200 in 2022 to 1000 by 2028.
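As a rough sanity check on this extrapolation, the compound-growth arithmetic can be sketched in Python. The function names are hypothetical, and the inputs are the round numbers quoted in the text (200 researchers in 2022, 28% growth per year), not the fitted curve itself.

```python
import math


def project(start, annual_rate, years):
    """Value after `years` of compound growth at `annual_rate`."""
    return start * (1 + annual_rate) ** years


def years_to_reach(start, target, annual_rate):
    """Years of compound growth needed to go from `start` to `target`."""
    return math.log(target / start) / math.log(1 + annual_rate)


START_YEAR, START_COUNT, RATE = 2022, 200, 0.28

for year in range(START_YEAR, START_YEAR + 8):
    print(year, round(project(START_COUNT, RATE, year - START_YEAR)))

print(f"years to reach 1000: {years_to_reach(START_COUNT, 1000, RATE):.1f}")
```

With these round inputs the count passes 1000 between six and seven years after 2022, which is broadly in line with the extrapolated graph.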

How could productivity increase in the future?

How the overall productivity of the technical AI safety research community will increase as the number of researchers increases is unclear. A well-known law that describes the research productivity of a field is Lotka's Law [7]. The formula for Lotka's Law is:

Y = C / X^n

Y is the number of researchers who have published X articles. C is the total number of contributors in the field who have published one article and n is a constant which usually has a value of 2. I found that a value of 2.3 best fits data from the Alignment Forum [8]:

The graph above shows that about 80 people have published a post on the Alignment Forum in the past six months. In this case, C = 80 and n = 2.3. Then the total number of posts published can be calculated by multiplying Y by X for each value of X and adding all the values together. For example:

80 / 1^2.3 = ~80 researchers have posted 1 post -> 80 * 1 = 80

80 / 2^2.3 = ~16 researchers have posted 2 posts -> 16 * 2 = 32

80 / 3^2.3 = ~6 researchers have posted 3 posts -> 6 * 3 = 18

What happens when the number of researchers is increased? In other words, what happens when the value of C is doubled?

I found that when C is doubled, the total number of articles published per year also doubles. In the chart above, the area under the red curve is exactly double the area under the blue curve. In other words, the total productivity of a research field increases linearly with the number of researchers. The reason is that increasing the number of researchers increases the number of low-productivity and high-productivity researchers equally.
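The doubling argument can be checked with a short Python sketch of the Lotka's Law sum. The function names and the `max_posts` cutoff are assumptions for illustration; the proportionality holds for any cutoff because C is a common factor in every term.

```python
def lotka_authors(c, x, n=2.3):
    """Expected number of researchers with exactly x posts: C / x^n."""
    return c / x ** n


def total_posts(c, n=2.3, max_posts=50):
    """Sum of x * Y(x) for x = 1..max_posts (max_posts is an assumed cutoff)."""
    return sum(x * lotka_authors(c, x, n) for x in range(1, max_posts + 1))


# Reproduce the worked example for C = 80, n = 2.3.
for x in (1, 2, 3):
    print(x, round(lotka_authors(80, x)))

# Doubling C doubles the total, since C factors out of every term.
ratio = total_posts(160) / total_posts(80)
print(f"total posts ratio when C doubles: {ratio:.2f}")
```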

It's important to note that simply increasing the number of posts will not necessarily increase the overall rate of progress. More researchers will be helpful if large problems can be broken up and parallelized so that each individual or team can work on a sub-problem. Nevertheless, increasing the size of the field should also increase the number of talented researchers if research quality is more important.

Conclusions

I estimated that there are about 300 full-time technical and 100 full-time non-technical AI safety researchers today which is roughly in line with previous estimates though my estimate for the number of technical researchers is significantly higher.

To be conservative, I think the correct order-of-magnitude estimate for the number of full-time AI safety researchers is around 100 today though I expect this to increase to 1000 in a few years.

The number of technical AI safety organizations and researchers has been increasing exponentially by about 10-30% per year, and I expect that trend to continue for several reasons:

- Funding: EA funding has increased significantly over the past several years and will probably continue to increase in the future. Also, AI is and will increasingly be advanced enough to be commercially valuable which will enable companies such as OpenAI and DeepMind to continue funding AI safety research.

- Interest: as AI advances and the gap between current systems and AGI narrows, it will become easier and require less imagination to believe that AGI is possible. Consequently, it might become easier to get funding for AI safety research. AI safety research will also seem increasingly urgent which will motivate more people to work on it.

- Tractability: as time goes on, the current AI architectures will probably become increasingly similar to the architecture used in the first AGI system which will make it easier to experiment with AGI-like systems and learn useful properties about them.

By extrapolating past trends, I've estimated that the number of technical AI safety organizations will double from about 20 to 40 by 2030 and the number of technical AI safety researchers will increase from about 300 in 2022 to 1000 by 2030. I find it striking how many well-known organizations working on AI safety were founded very recently. This trend suggests that some of the most influential AI safety organizations will be founded in the future.

I then found that the number of posts published per year will likely increase at the same rate as the number of researchers. If the number of researchers increases by a factor of five by the end of the decade, I expect the number of posts or papers per year to also increase by that amount.

Breaking up problems into subproblems will probably help make the most of that extra productivity. As the volume of articles increases, skills or tools for summarization, curation, or distillation will probably be highly valuable for informing researchers about what is currently happening in their field.

[1] My estimate is far lower as I would only classify researchers as 'AI capabilities' researchers if they push the state-of-the-art forward. Though the number of AI safety researchers is almost certainly lower than the number of AI capabilities researchers.

[2] What I did:

- Alignment Forum: scrape posts and count the number of unique authors.

- DeepMind: scrape safety-tagged publications and count the number of unique authors.

- OpenAI: manually classify publications as safety-related. Then count the number of unique authors.

[3] Manually curated list of people on the Alignment Forum who don't work at any of the other organizations. Includes groups such as:

- Independent alignment researchers (e.g. John Wentworth)

- Researchers in programs such as SERI MATS and Refine (e.g. carado)

- Researchers in master's or PhD degrees studying AI safety (e.g. Marius Hobbhahn)

[4] There are about 45 research profiles on Google Scholar with the 'AI governance' tag. I counted about 8 researchers who weren't at the other organizations listed.

[5] Note that the technical estimate is more accurate than the non-technical estimate because technical research is more clearly defined. I also put more research into estimating the number of technical AI safety researchers than non-technical researchers.

Also bear in mind that since I probably failed to include some organizations or groups in the table, the true figures could be higher.

[6] These are rough guesses but the model is fairly robust to them.

[7] Edit: thank you puffymist from the LessWrong comments section for recommending Lotka's Law over Price's Law as it is more accurate.

[8] In case you're wondering, the outlier on the far right of the chart is John Wentworth.

Thanks for posting, seems good to know these things! I think some of the numbers for non-technical research should be substantially lower--enough that an estimate of ~55 non-technical safety researchers seems more accurate:

If I didn't mess up my math, all that should shift our estimate from 93 to ~42. Adding in 8 from Rethink (going by Peter's comment) and 5 (?) from OpenPhil, we get ~55.

I re-estimated counts for many of the non-technical organizations and here are my conclusions:

Thanks for the updates!

I have it on good word that CSET has well under 10 safety-focused researchers, but fair enough if you don't want to take an internet stranger's word for things.

I'd encourage you to also re-estimate the counts for CSER, Leverhulme, and the Future of Life Institute.

I also think the number of "Other" is more like 4.

I re-estimated the number of researchers in these organizations and the edits are shown in the 'EDITS' comment below.

Copied from the EDITS comment:

- CSER: 5-5-10 -> 2-5-15

- FLI: 5-5-20 -> 3-5-15

- Leverhulme Centre: 5-10-70 (Low confidence) -> 2-5-15 (Medium confidence)

My counts for CSER:

- full-time researchers: 3

- research affiliates: 4

FLI: counted 5 people working on AI policy and governance.

Leverhulme Centre:

- 7 senior research fellows

- 14 research fellows

Many of them work at other organizations. I think 5 is a good conservative estimate.

New footnote for the 'Other' row in the non-technical list of researchers (estimate is 10):

"There are about 45 research profile on Google Scholar with the 'AI governance' tag. I counted about 8 researchers who weren't at the other organizations listed."

[Edit: I think the following no longer makes sense because the comment it's responding to was edited to add explanations, or maybe I had just missed those explanations in my first reading. See my other response instead.]

Thanks for this. I don't see how the new estimates incorporate the above information. (The medians for CSER, Leverhulme, and FLI seem to still be at 5 each.)

(Sorry for being a stickler here--I think it's important that readers get accurate info on how many people are working on these problems.)

New estimates:

CSER: 2-5-10 -> 2-3-7

FLI: 5-5-20 -> 3-4-6

Leverhulme: 2-5-15 -> 3-4-10

Thanks for the information! Your estimate seems more accurate than mine.

In the case of Epoch, I would count every part-time employee as roughly half a full-time employee to avoid underestimating their productivity.

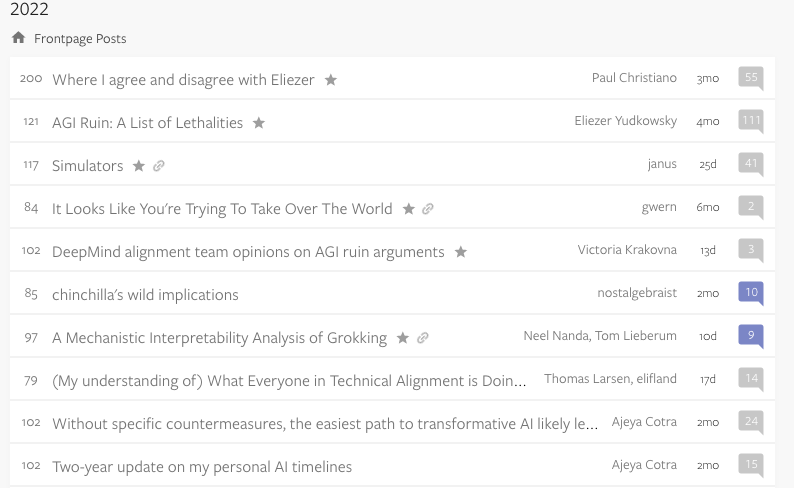

I think Alignment Forum double-counts researchers as most of them are not independent, especially if you count MATS separately (which I think had about 6 mentors and 31 fellows this summer). Looking at the top posts this year:

Paul Christiano works at ARC. Yudkowsky works at MIRI. janus is from Conjecture, Krakovna is at DeepMind, I don't know about nostalgebraist, Nanda is independent, Larsen works at MATS, Ajeya is at OpenPhil. So 4-5 of the top authors are double-counted.

I went through all the authors from the Alignment Forum from the past ~6 months, manually researched each person and came up with a new estimate named 'Other' of about 80 people which includes independent researchers, other people in academia and people in programs such as SERI MATS.

nostalgebraist is taking a break from “ai discourse”

More edits:

- DeepMind: 5 -> 10.

- OpenAI: 5 -> 10.

- Moved GoodAI from the non-technical to technical table.

- Added technical research organization: Algorithmic Alignment Group (MIT): 4-7.

- Merged 'other' and 'independent researchers' into one group named 'other' with new manually created (accurate) estimate.

Edits based on feedback from LessWrong and the EA Forum:

EDITS:

- Added new 'Definitions' section to introduction to explain definitions such as 'AI safety', 'researcher' and the difference between technical and non-technical research.

UPDATED ESTIMATES (lower bound, estimate, upper bound):

TECHNICAL

- CHAI: 10-30-60 -> 5-25-50

- FHI: 10-10-40 -> 5-10-30

- MIRI: 10-15-30 -> 5-10-20

NON-TECHNICAL

- CSER: 5-5-10 -> 2-5-15

- Delete BERI from the list of non-technical research organizations

- Delete SERI from the list of non-technical research organizations

- Leverhulme Centre: 5-10-70 (Low confidence) -> 2-5-15 (Medium confidence)

- FLI: 5-5-20 -> 3-5-15

- Add OpenPhil: 2-5-15

- Epoch: 5-10-15 -> 2-4-10

- Add 'Other': 5-10-50

More minor suggestions:

(Edit: also many people on the CHAI website don't actually do AI safety research, but definitely more than 5 do.)

Edit: updated OpenAI from 5 to 10.

From their website, AI Impacts currently has 2 researchers and 2 support staff (the current total estimate is 3).

The current estimate for Epoch is 4 which is similar to most estimates here.

I'm trying to come up with a more accurate estimate for independent researchers and 'Other' researchers.

What about the Alignment of Complex Systems Research Group at Charles University and the Foundations of Cooperative AI Lab at CMU?

I added them to the list of technical research organizations. Sorry for the delay.

According to Price's Law, the square root of the number of contributors contributes half of the progress. If there are 400 people working on AI safety full-time then it's quite possible that just 20 highly productive researchers are making half the contributions to AI safety research. I expect this power law to apply to both the quantity and the quality of research.

Thanks, this is helpful!

Just a heads up my latest estimate is here in footnote 15: https://www.effectivealtruism.org/articles/introduction-to-effective-altruism#fn-15

I went for 300 technical researchers, though I say the estimate seems more likely to be too high than too low, so it seems like we're pretty close.

(My old Twitter thread was off the top of my head, and missing the last year of growth.)

Glad to see more thorough work on this question :)

Where did you find the "160 notable researchers" part?

Rethink Priorities has a sizable AI Governance and Strategy team, currently with 8 members. I think this should qualify under Non-technical AI safety research.

Edit: added Rethink Priorities to the list of non-technical organizations.

This question would have been way easier if I had just estimated the number of AI safety researchers in my city (1?) instead of the whole world.

I last checked the AI Watch database a few weeks ago and it seemed very bad. E.g. missing Mark Xu, John Wentworth, Rebecca Gorman, Vivek Hebbar, and Quintin Pope, many of whom are MATS mentors! Also missing Conjecture, ARC, Apart Research, and GovAI as far as I can tell.

Given these flaws, it's strange that the AI Watch database is part of the most visible AI safety intro material. The official EA intro (Ben Todd's comment suggests he wrote this?) says there are ~300 people working on AI safety; it cites a few sources including AI Watch. The 80k problem profile also estimates 300; as far as I can tell the only source is AI Watch.

Thanks for making this. I expect that after you make edits based on comments and such this will be the most up to date and accurate public look at this question (the current size of the field). I look forward to linking people to it!

Do you relatedly have any read on the current number of AI safety research papers?

Good question. I haven't done much research on this but a paper named Understanding AI alignment research: A Systematic Analysis found that the rate of new Alignment Forum and arXiv preprints grew from less than 20 per year in 2017 to over 400 per year in 2022. However, the number of Alignment Forum posts has grown much faster than the number of arXiv preprints.

Do you have any sense of how many may be working predominately on near-term risks vs. long term risks? I'd be interested to know the latter number, and have reason to believe it would be lower than this estimate (i.e. because I highly doubt OpenAI has 10 people working fully on the long term risks instead of the near term stuff that they generally seem more interested in)

At OpenAI, I'm pretty sure there are far more people working on near-term problems than long-term risks. Though the Superalignment team now has over 20 people from what I've heard.

Oh really? I thought it was far smaller, like in the range of 5-10.

The Superalignment team currently has about 20 people according to Jan Leike. Previously I think the scalable alignment team was much smaller and probably only 5-10 people.

Good update, thanks for sourcing!

"Price's Law says that half of the contributions in a field come from the square root of the number of contributors. In other words, productivity increases linearly as the number of contributors increases exponentially." This is incorrect; the square root of an exponential is still an exponential... it's just exp(0.5 * x) rather than exp(x).

The whole section on Price's Law has been replaced with a section on Lotka's Law.

Thanks, I think you're right. I'll have to edit that section.