peterbarnett

Bio

Posts 5

Comments24

I would guess grants made to Neil's lab are referring to the MIT FutureTech group, which he's the director of. FutureTech says on its website that it has received grants from OpenPhil and the OpenPhil website doesn't seem to mention a grant to FutureTech anywhere, so I assume the OpenPhil FutureTech grant was the grant made to Neil's lab.

I think it's worth noting that the two papers linked (which I agree are flawed and not that useful from an x-risk viewpoint) don't acknowledge OpenPhil funding, and so maybe the OpenPhil funding is going towards other projects within the lab.

I think that Neil Thompson has some work which is pretty awesome from an x-risk perspective (often in collaboration with people from Epoch):

- Algorithmic progress in language models

- Economic impacts of AI-augmented R&D

- The growing influence of industry in AI research

From skimming his Google Scholar, a bunch of other stuff seems broadly useful as well.

In general, research forecasting AI progress and economic impacts seems great, and even better if it's from someone academically legible like Neil Thompson.

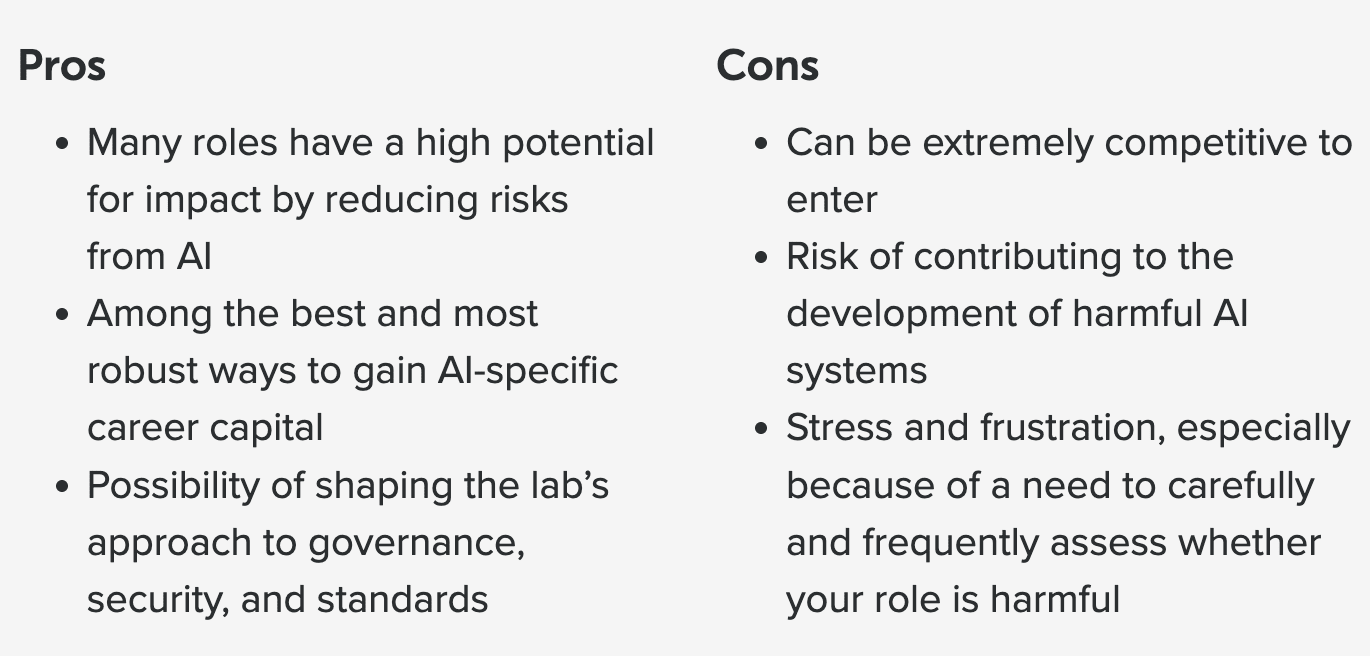

Relatedly, I think that the "Should you work at a leading AI company?" article shouldn't start with a pros and cons list which sort of buries the fact that you might contribute to building extremely dangerous AI.

I think "Risk of contributing to the development of harmful AI systems" should at least be at the top of the cons list. But overall this sort of reminds me of my favorite graphic from 80k:

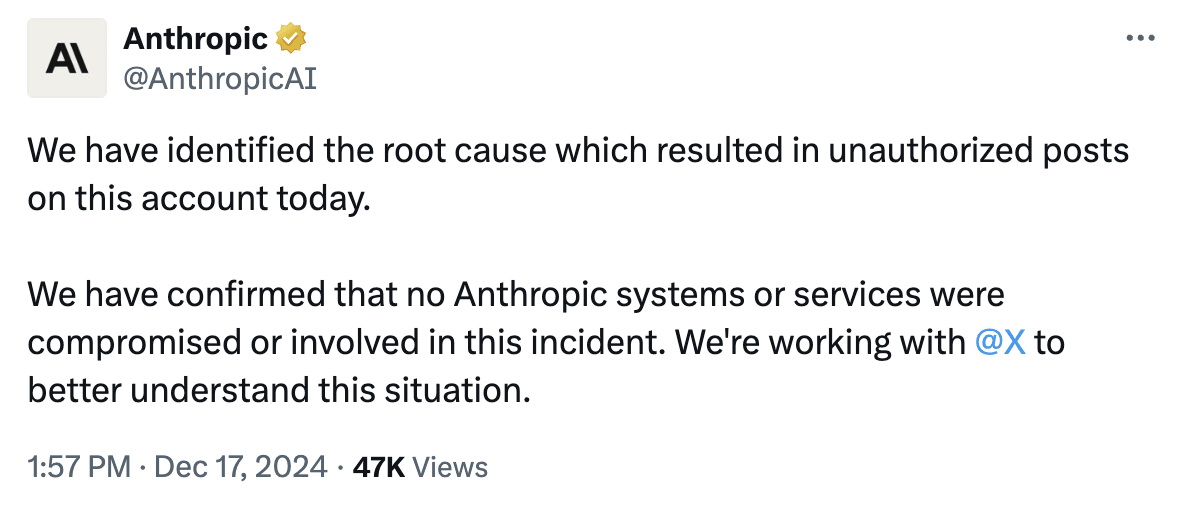

Insofar as you are recommending the jobs but not endorsing the organization, I think it would be good to be fairly explicit about this in the job listing. The current short description of OpenAI seems pretty positive to me:

OpenAI is a leading AI research and product company, with teams working on alignment, policy, and security. You can read more about considerations around working at a leading AI company in our career review on the topic. They are also currently the subject of news stories relating to their safety work.

I think this should say something like "We recommend jobs at OpenAI because we think these specific positions may be high impact. We would not necessarily recommend working at other jobs at OpenAI (especially jobs which increase AI capabilities)."

I also don't know what to make of the sentence "They are also currently the subject of news stories relating to their safety work." Is this an allusion to the recent exodus of many safety people from OpenAI? If so, I think it's misleading and gives far too positive an impression.

Do you mean the posts early last year about fundamental controllability limits?

Yep, that is what I was referring to. It does seem like you're likely to be more careful in the future, but I'm still fairly worried about advocacy done poorly. (Although, like, I also think people should be able to advocacy if they want)

I have similar views to Marius's comment. I did AISC in 2021 and I think it was somewhat useful for starting in AI safety, although I think my views and understanding of the problems were pretty dumb in hindsight.

AISC does seem extremely cheap (at least for the budget options). If you have like 80% on the "Only top talent matters" model (MATS, Astra, others) and 20% on the "Cast a wider net" model (AISC), I would still guess that AISC seems like a good thing to do.

My main worries here are with the negative effects. These are mainly related to the "To not build uncontrollable AI" stream; 3 out of 4 of these seem to be about communication/politics/advocacy.[1] I'm worried about these having negative effects, making the AI safety people seem crazy, uninformed, or careless. I'm mainly worried about this because Remmelt's recent posting on LW really doesn't seem like careful or well thought through communication. (In general I think people should be free to do advocacy etc, although please think of externalities) Part of my worry is also from AISC being a place for new people to come, and new people might not know how fringe these views are in the AI safety community.

I would be more comfortable with these projects (and they would potentially still be useful!) if they were focused on understanding the things they were advocating for more. E.g. a report on "How could lawyers and coders stop AI companies using their data?", rather than attempting to start an underground coalition.

All the projects in the "Everything else" streams (run by Linda) seem good or fine, and likely a decent way to get involved and start thinking about AI safety. Although, as always, there is a risk of wasting time with projects that end up being useless.

[ETA: I do think that AISC is likely good on net.]

- ^

The other one seems like a fine/non-risky project related to domain whitelisting.

This is missing a very important point, which is that I think humans have morally relevant experience and I'm not confident that misaligned AIs would. When the next generation replaces the current one this is somewhat ok because those new humans can experience joy, wonder, adventure etc. My best guess is that AIs that take over and replace humans would not have any morally relevant experience, and basically just leave the universe morally empty. (Note that this might be an ok outcome if by default you expect things to be net negative)

I also think that there is way more overlap in the "utility functions" between humans, than between humans and misaligned AIs. Most humans feel empathy and don't want to cause others harm. I think humans would generally accept small costs to improve the lives of others, and a large part of why people don't do this is because people have cognitive biases or aren't thinking clearly. This isn't to say that any random human would reflectively become a perfectly selfless total utilitarian, but rather that most humans do care about the wellbeing of other humans. By default, I don't think misaligned AIs will really care about the wellbeing of humans at all.

Yeah, that's reasonable, as of 5:36pm PST, November 18, 2023 it still seems like a good bet.

I definitely am worried about either Sam Altman + Greg Brockman starting a new, less safety-focused lab, or Sam+Greg somehow returning to OpenAI and removing the safety-focused people from the board.

Even with this, it seems pretty good to have safety-focused people with some influence over OpenAI. I'm a bit confused about situations where it's like "Yes, it was good to get influence, but it turned out you made a bad tactical mistake and ended up making things worse."

I didn't read the post, so this isn't feedback. I just wanted to share my related take that I only want feedback if it's positive, and otherwise people should keep their moronic opinions to themselves.