I didn't want to read all of @Vasco Grilo🔸's post on the "meat eating" problem and all 80+ comments, so I expanded all the comments and copy/pasted the entire webpage into Claude with the following prompt: "Please give me a summary of the authors argument (dot points, explained simply) and then give me a summary of the kinds of push back he got (dot points, explained simply, thematised, giving me a sense of the concentration/popularity of themes in the push back)"

Below is the result (the Forum team might want to consider how posts with large numbers of comments can be read quickly):

The author claims that saving lives in developing countries might cause more harm than good in the short term because:

When people are saved from death, they consume animal products

The suffering of farm animals (especially chickens and farmed fish/shrimp) from being raised and killed outweighs the happiness of the human life saved

Using specific calculations, they estimate that one average person causes 15.5 times more animal suffering than human happiness globally (with higher ratios in some countries)

The author specifically criticizes two organizations:

GiveWell (for granting $1.09 billion without consid

Thanks for summarizing!

It strikes me that the above criticisms don't really seem consequentialist / hedonic utilitarian-focused. I'm curious if other criticisms are, or if some of these are intended to be as such (some complex logic like, "Acting in the standard-morality way will wind up being good for consequentialist reasons in some round-about way".)

More generally, those specific objections strike me as very weak. I'd expect and hope that people at Open Philanthropy and GiveWell would have better objections.

yanni kyriacos

No worries :)

I personally think this is a conversation worth having, but I can imagine a bunch of reasons people wouldn’t want to. For one thing, it is a PR nightmare!

I met Australia's Assistant Minister for Defence last Friday. I asked him to write an email to the Minister in charge of AI, asking him to establish an AI Safety Institute. He said he would. He also seemed on board with not having fully autonomous AI weaponry.

All because I sent one email asking for a meeting + had said meeting.

Advocacy might be the lowest hanging fruit in AI Safety.

Akash's Speaking to Congressional staffers about AI risk seems similar:

Like you, Akash just cold-emailed people:

There's a lot of concrete learnings in that writeup; definitely worth reading I think.

I don't know how to say this in a way that won't come off harsh, but I've had meetings with > 100 people in the last 6 months in AI Safety and I've been surprised how poor the industry standard is for people:

- Planning meetings (writing emails that communicate what the meeting will be about, its purpose, my role)
- Turning up to scheduled meetings (like literally, showing up at all)
- Turning up on time
- Turning up prepared (i.e. with informed opinions on what we're going to discuss)
- Turning up with clear agendas (if they've organised the meeting)
- Running the meetings
- Following up after the meeting (clear communication about next steps)

I do not have an explanation for what is going on here but it concerns me about the movement interfacing with government and industry. My background is in industry and these just seem to be things people take for granted?

It is possible I give off a chill vibe, leading to people thinking these things aren't necessary, and they'd switch gears for more important meetings!

My 2 cents is that I think this is a really important observation (I think it's helpful) and I would be interested to see what other people's feedback is on this front.

hypothesis that springs to mind, might or might not be useful for engaging with it productively. might be wrong depending on what class of people you have been having meetings with.

when you select for people working on AI risks, you select for people who are generally less respectful of the status quo and social norms. you select for the kind of person who is less likely to do something just because it's a social norm. for this kind of person to do a thing, they have to reach, through their own reasoning, the conclusion "It would be worth the effort for me to change myself to become the kind of person who shows up on time consistently, compared to other things I could be spending my effort on". they might just figure it's a better use of their effort to think about their research all day.

I would guess that most people on this earth don't show up on time because they reasoned it through that this is a good idea, they do it because it has been drilled into them through social norms, and they valued those social norms higher.

(note: this comment is not intended to be an argument that showing up on time is a waste of time)

yanni kyriacos

It just occurred to me that maybe posting this isn't helpful. And maybe net negative. If I get enough feedback saying it is then I'll happily delete.

When AI Safety people are also vegetarians, vegans or reducetarian, I am pleasantly surprised, as this is one (of many possible) signals to me they're "in it" to prevent harm, rather than because it is interesting.

I am skeptical that exhibiting one of the least cost-effective behaviors for the purpose of reducing suffering would be correlated much with being "in it to prevent harm". Donating to animal charities, or being open to trade with other people about reducing animal suffering, or other things that are actually effective seem like much better indicators that people are actually in it to prevent harm.

My best guess is that most people who are vegetarians, vegans or reducetarians, and are actually interested in scope-sensitivity, are explicitly doing so for signaling and social coordination reasons (which to be clear, I think has a bunch going for it, but seems like the kind of contingent fact that makes it not that useful as a signal of "being in it to prevent harm").

Have you actually just talked to people about their motives for reducing their animal product consumption, or read about them? Do you expect them to tell you it's mostly for signaling or social coordination, or (knowingly or unknowingly) lie that it isn't?

I'd guess only a minority of EAs go veg mostly for signaling or social coordination.

Other reasons I find more plausibly common, even among those who make bigger decisions in scope-sensitive ways:

Finding it cost-effective in an absolute sense, e.g. >20 chickens spared per year, at small personal cost (usually ignoring opportunity costs to help others more). (And then maybe falsely thinking you should go veg to do this, rather than just cut out certain animal products, or finding it easier to just go veg than navigate exceptions.)

Wanting to minimize the harm they personally cause to others, or to avoid participating in overall harmful practices, or deontological or virtue ethics reasons, separately from actively helping others or utilitarian-ish reasons.

Not finding not eating animal products very costly, or not being very sensitive to the costs. It may be easy or take from motivational "budgets" psychologically separate from wo

I have had >30 conversations with EA vegetarians and vegans about their reasoning here. The people who thought about it the most seem to usually settle on it for signaling reasons. Maybe this changed over the last few years in EA, but it seemed to be where most people I talked to were at when I had lots of conversations with them in 2018.

I agree that many people say (1), but when you dig into it, it seems clear that people incur costs that would be better spent on donations, and so I don't think it's good reasoning. As far as I can tell most people who think about it carefully seem to stop thinking it's a good reason to be vegan/vegetarian.

I do think the self-signaling and signaling effects are potentially substantial.

I also think (4) is probably the most common reason, and I do think probably captures something important, but it seems like a bad inference that "someone is in it to prevent harm" if (4) is their reason for being vegetarian or vegan.

Fermi–Dirac Distribution

I’ve thought a lot about this, because I’m serious about budgeting and try to spend as little money as possible to make more room for investments and donations. I also have a stressful job and don’t like to spend time cooking. I did not find it hard to switch to being vegan while keeping my food budget the same and maintaining a high-protein diet.

Pea protein is comparable in cost per gram protein to the cheapest animal products where I live, and it requires no cooking. Canned beans are a bit more expensive but still very cheap and also require no cooking. Grains, ramen and cereal are very cheap sources of calories. Plant milks are more expensive than cow's milk, but still fit in my very low food budget. Necessary supplements like B12 are very cheap.

On a day-to-day basis, the only real cooking I do is things like pasta, which don’t take much time at all. I often go several weeks without doing any real cooking. I’d bet that I both spend substantially less money on food and substantially less time cooking than the vast majority of omnivores, while eating more protein.

As a vegan it's also easy to avoid spending money in frivolous ways, like on expensive ready-to-eat snacks and e.g. DoorDash orders.

I haven't had any health effects either, or any differences in how I feel day-to-day, after more than 1 year. Being vegan may have helped me maintain a lower weight after I dropped several pounds last year, but it's hard to know the counterfactual.

I didn't know coming in that being vegan would be easy; I decided to try it out for 1 month and then stuck with it when I "learned" how to do it. There's definitely a learning curve, but I'd say that for some people who get the hang of it, reason (1) in Michael's comment genuinely applies.

NunoSempere

I don't think one year is enough time to observe effects. Anecdotally, I think (but am not sure) that I started to have problems after three years of being a vegetarian.

Fermi–Dirac Distribution

What were your problems?

MichaelStJules

Do you mean financial costs, or all net costs together, including potentially through time, motivation, energy, cognition? I think it's reasonably likely that for many people, there are ~no real net (opportunity) costs, or that it's actually net good (but if net good in those ways, then that would probably be a better reason than 1). Putting my thoughts in a footnote, because they're long and might miss what you have in mind.[1]

Ya, that seems fair. If they had the option to just stop thinking and feeling bad about it and chose that over going veg, which is what my framing suggests they would do, then it seems the motivation is to feel better and get more time, not avoid harming animals through their diets. This would be like seeing someone in trouble, like a homeless person, and avoiding them to avoid thinking and feeling bad about them. This can be either selfish or instrumentally other-regarding, given opportunity costs.

If they thought (or felt!) the right response to the feelings is to just go veg and not to just stop thinking and feeling bad about it, then I would say they are in it to prevent harm, just guided by their feelings. And their feelings might not be very scope-sensitive, even if they make donation and career decisions in scope-sensitive ways. I think this is kind of what virtue ethics is about. Also potentially related: "Do the math, then burn the math and go with your gut".

1. ^

Financial/donations: It's not clear to me that my diet is more expensive than if I were omnivorous. Some things I've substituted for animal products are cheaper and others are more expensive. I haven't carefully worked through this, though. It's also not clear that if it were more expensive, that I would donate more, because of how I decide how much to donate, which is based on my income and a vague sense of my costs of living, which probably won't pick up differences due to diet (but maybe it does in expectation, and maybe it means donating less later, becaus

Habryka

I meant net costs all together, though I agree that if you take into account motivation "net costs" becomes a kind of tricky concept, and many people can find it motivating, and that is important to think about, but also really doesn't fit nicely into a harm-reducing framework.

I mean, being an omnivore would allow you to choose between more options. Generally having more options very rarely hurts you.

Overall I like your comment.

MichaelStJules

Ya, I guess the value towards harm reduction would be more indirect/instrumental in this case.

I think this is true of idealized rational agents with fixed preferences, but I'm much less sure about actual people, who are motivated in ways they wouldn't endorse upon reflection and who aren't acting optimally impartially even if they think it would be better on reflection if they did.

By going veg, you eliminate or more easily resist the motivation to eat more expensive animal products that could have net impartial opportunity costs. Maybe skipping (expensive) meats hurts you in the moment (because you want meat), but it saves you money to donate to things you think matter more. You'd be less likely to correctly — by reflection on your impartial preferences — skip the meat and save the money if you weren't veg.

And some people are not even really open to (cheaper) plant-based options like beans and tofu, and that goes away going veg. That was the case for me. My attitude before going veg would have been irrational from an impartial perspective, just considering the $ costs that could be donated instead.

Of course, some people will endorse being inherently partial to themselves upon reflection, so eating animal products might seem fine to them even at greater cost. But the people inclined to cut out animal products by comparing their personal costs to the harms to animals probably wouldn't end up endorsing their selfish motivation to eat animal products over the harms to animals.

The other side is that a veg*n is more motivated to eat the more expensive plant-based substitutes and go to vegan restaurants, which (in my experience) tend to be more expensive.

I'm not inclined to judge how things will shake out based on idealized models of agents. I really don't know either way, and it will depend on the person. Cheap veg diets seem cheaper than cheap omni diets, but if people are eating enough plant-based meats, their food costs would probably increase.

Here are pr

MichaelStJules

I guess this is a bit pedantic, but you originally wrote "My best guess is that most people who are vegetarians, vegans or reducetarians, and are actually interested in scope-sensitivity, are explicitly doing so for signaling and social coordination reasons". I think veg EAs are generally "actually interested in scope-sensitivity", whether or not they're thinking about their diets correctly and in scope-sensitive ~utilitarian terms. "The people who thought about it the most" might not be representative, and more representative motivations might be better described as "in it to prevent harm", even if the motivations turn out to be not utilitarian, not appropriately scope-sensitive or misguided.

This is an old thread, but I'd like to confirm that a high fraction of my motivation for being vegan[1] is signaling to others and myself. (So, n=1 for this claim.) (A reasonable fraction of my motivation is more deontological.)

Happy to hear this from someone working on AI Safety.

Do you think EA/EA orgs should do something about it?

(Crypto has a similar problem. There are people genuinely interested in it but many people (most?) are just into it for the money)

yanni kyriacos

I actually think it is a huge risk because when the shit hits the fan I want to know people are optimising for the right thing. It is like the old saying; "it is only a value if it costs you something"

Anyway, I'd like to think that orgs suss this out in the interview stage.

People in EA are ridiculously helpful. I've been able to get a Careers Conference off the ground because people in other organisations are happy to help out in significant ways. In particular, Elliot Teperman and Bridget Loughhead from EA Australia, Greg Sadler from the Good Ancestors Project and Chris Leong. Several people have also volunteered to help at the Conference as well as on other tasks, such as running meetups and creating content.

In theory I'm running an organisation by myself (via an LTFF grant), but because it is nested within the EA/AIS space, I've got the support of a big network. Otherwise this whole project would be insane to start.

If someone is serious about doing Movement Building, but worries about doing it alone, this feels like important information.

AI Safety has less money, talent, political capital, tech and time. We have only one distinct advantage: support from the general public. We need to start working that advantage immediately.

[IMAGE] Extremely proud and excited to watch Greg Sadler (CEO of Good Ancestors Policy) and Soroush Pour (Co-Founder of Harmony Intelligence) speak to the Australian Federal Senate Select Committee on Artificial Intelligence. This is a big moment for the local movement. Also, check out who they ran after.

What is your AI Capabilities Red Line Personal Statement? It should read something like "when AI can do X in Y way, then I think we should be extremely worried / advocate for a Pause*".

I think it would be valuable if people started doing this; we can't feel when we're on an exponential, so it's likely we will have powerful AI creep up on us.

@Greg_Colbourn just posted this and I have an intuition that people are going to read it and say "while it can do Y it still can't do X"

I think it is good to have some ratio of upvoted/agreed : downvotes/disagreed posts in your portfolio. I think if all of your posts are upvoted/high agreeance then you're either playing it too safe or you've eaten the culture without chewing first.

I think some kinds of content are uncontroversially good (e.g. posts that are largely informational rather than persuasive), so I think some people don't have a trade-off here.

I'm pretty confident that Marketing is in the top 1-3 skill bases for aspiring Community / Movement Builders.

When I say Marketing, I mean it in the broad sense it used to mean. In recent years "Marketing" = "Advertising", but I use the classic Four P's of Marketing to describe it.

The best place to get such a skill base is at FMCG / mass marketing organisations such as the ones below. Second best would be consulting firms (e.g. McKinsey & Company):

Procter & Gamble (P&G)

Unilever

Coca-Cola

Amazon

1. Product
- What you're selling (goods or services)
- Features and benefits
- Quality, design, packaging
- Brand name and reputation
- Customer service and support

Another thing you can do is send comments on proposed legislation via regulations.gov. I did so last week about a recent Californian bill on open-sourcing model weights (now closed). In the checklist (screenshot below) they say: "the comment process is not a vote – one well supported comment is often more influential than a thousand form letters". There are people much more qualified on AI risk than I over here, so in case you didn't know, you might want to keep an eye on new regulation coming up. It doesn't take much time and seems to have a fairly big impact.

Big AIS news imo: “The initial members of the International Network of AI Safety Institutes are Australia, Canada, the European Union, France, Japan, Kenya, the Republic of Korea, Singapore, the United Kingdom, and the United States.”

Flaming hot take: I wonder if some EAs suffer from Scope Oversensitivity - essentially the inverse of the identifiable victim effect. Take the animal welfare vs global health debate: are we sometimes biased by the sheer magnitude of animal suffering numbers, rather than other relevant factors? Just as the identifiable victim effect leads people to overweight individual stories, maybe we're overweighting astronomical numbers.

EAs pride themselves on scope sensitivity to combat emotional biases, but taken to an extreme, could this create its own bias? Are we sometimes too seduced by bigger numbers = bigger problem? The meta-principle might be that any framework, even one designed to correct cognitive biases, needs wisdom and balance to avoid becoming its own kind of distortion.

Scope insensitivity has some empirical backing -- e.g. the helping birds study -- and some theorised mechanisms of action, e.g. people lacking intuitive understanding of large numbers.

Scope oversensitivity seems possible in theory, but I can't think of any similar empirical or theoretical reasons to think it's actually happening.

To the extent that you disagree, it's not clear to me whether it's because you and I disagree on how EAs weight things like animal suffering, or whether we disagree on how it ought to be weighted. Are you intending to cast doubt on the idea that a problem that is 100x as large is (all else equal) 100x more important, or are you intending to suggest that EAs treat it as more than 100x as important?

MichaelDickens

Upvoted and disagree-voted.

Do you have any particular reason to believe that EAs overweight large problems?

EAs donate much more to global health than to animal welfare. Do you think the ratio should be even higher still?

Another shower thought - I’ve been vegetarian for 7 years and vegan for 6.5.

I find it very surprising and interesting how little it has nudged anyone in my friends / family circle to reduce their consumption of meat.

I would go as far as to say it has made literally no difference!

Keep in mind I’m surrounded by extremely compassionate and mostly left leaning people.

I also have relatively wide networks and I normally talk about animal welfare in a non annoying way.

You’d have thought in that time one person would have been nudged - nope!

My intention going vegan wasn’t as some social statement, but if you’d asked me 7 years later would there have been some social effect, I would have guessed yes.

So your surprise/expectation seems reasonable! Of course, I don't know whether it's actually surprising, since presumably whether anyone actually converts depends on lots of other features of a given social network (do your networks contain a lot of people who were already vegetarian/vegan?).

Thanks for sharing that data David, I appreciate that :)

Do you think it can answer the question: if you're the first in your family to switch to veganism/vegetarianism, how likely is it that another family member will follow suit within X years?

(Also, no, there aren't any vegetarians or vegans among my friends or family).

FWIW I'd probably count myself as having ~ 30 close friends and family.

This also seems like good research for the GWWC pledge.

David_Moss

Thanks!

I'm afraid not. I think we'd need to know more about the respondents' families and the order in which they adopted veganism/vegetarianism to assess that. I agree that it sounds like an interesting research question!

yanni kyriacos

Maybe one day 😊

Sean Sweeney

Have you tried cooking your best vegan recipes for others? In my experience sometimes people ask for the recipe and make it for themselves later, especially health-conscious people. For instance, I really like this vegan pumpkin pie that's super easy to make: https://itdoesnttastelikechicken.com/easy-vegan-pumpkin-pie/

yanni kyriacos

Thanks for the comment Sean. Because of me they’ve (seemingly gladly) eaten vegan-only meals dozens of times. My mum has even made me vegan dishes to take home on dozens of occasions. They almost all acknowledge the horror of factory farming. Veganism is just firmly bucketed as a “yanni thing”.

[anonymous]

That is interesting.

I think that most beings[1] who I've become very close with (I estimate this reference class is ≤10) have gone vegan in a way that feels like it was because of our discussions, though it's possible they would have eventually otherwise, or that our discussions were just the last straw.

In retrospect, I wonder if I had first emanated a framing of taking morality seriously (such that "X is bad" -> "I will {not do X} or try to stop X"). I think I also tended to become that close with beings who do already, and who are more intelligent/willing to reflect than what seems normal.

1. ^

(I write 'being' because some are otherkin)

My previous take on writing to Politicians got numbers, so I figured I'd post the email I send below.

I am going to make some updates, but this is the latest version:

---

Hi [Politician]

My name is Yanni Kyriacos, I live in Coogee, just down the road from your electorate.

If you're up for it, I'd like to meet to discuss the risks posed by AI. In addition to my day job building startups, I do community / movement building in the AI Safety / AI existential risk space. You can learn more about AI Safety ANZ by joining our Facebook group here or the PauseAI movement here. I am also a signatory of Australians for AI Safety - a group that has called for the Australian government to set up an AI Commission (or similar body).

Recently I worked with Australian AI experts (such as Good Ancestors Policy) in making a submission to the recent safe and responsible AI consultation process. In the letter, we called on the government to acknowledge the potential catastrophic and existential risks from artificial intelligence. More on that can be found here.

There are many immediate risks from already existing AI systems like ChatGPT or Midjourney, such as disinformation or improper implementation in various ...

Thanks for sharing, Yanni, and it is really cool that you managed to get Australia's Assistant Minister for Defence interested in creating an AI Safety Institute!

Did you mean to include a link?

The Metaculus question you link to involves meeting many conditions besides passing university exams:

[PHOTO] I sent 19 emails to politicians, had 4 meetings, and now I get emails like this. There is SO MUCH low hanging fruit in just doing this for 30 minutes a day (I would do it but my LTFF funding does not cover this). Someone should do this!

(Speaking as someone on LTFF, but not on behalf of LTFF)

How large of a constraint is this for you? I don't have strong opinions on whether this work is better than what you're funded to do, but usually I think it's bad if LTFF funding causes people to do things that they think are less (positively) impactful!

We probably can't fund people to do things that are lobbying or lobbying-adjacent, but I'm keen to figure out or otherwise brainstorm an arrangement that works for you.

yanni kyriacos

Hey Linch, thanks for reaching out! Maybe send me your email or HMU here yannikyriacos@gmail.com

huw

(I think you blanked out their name from their email footer but not from the bottom of the email, but not sure how anonymous you wanted to keep them.)

Larry Ellison, who will invest tens of billions in Stargate, said uberveillance via AGI will be great because then police and the populace would always have to be on their best behaviour. It is best to assume the people pushing 8 billion of us into the singularity have psychopathy (or similar disorders). This matters because we need to know who we're going up against: there is no rationalising with these people. They aren't counting the QALYs!

Footage of Larry’s point of view starts around 12.00 on Matt Wolf’s video

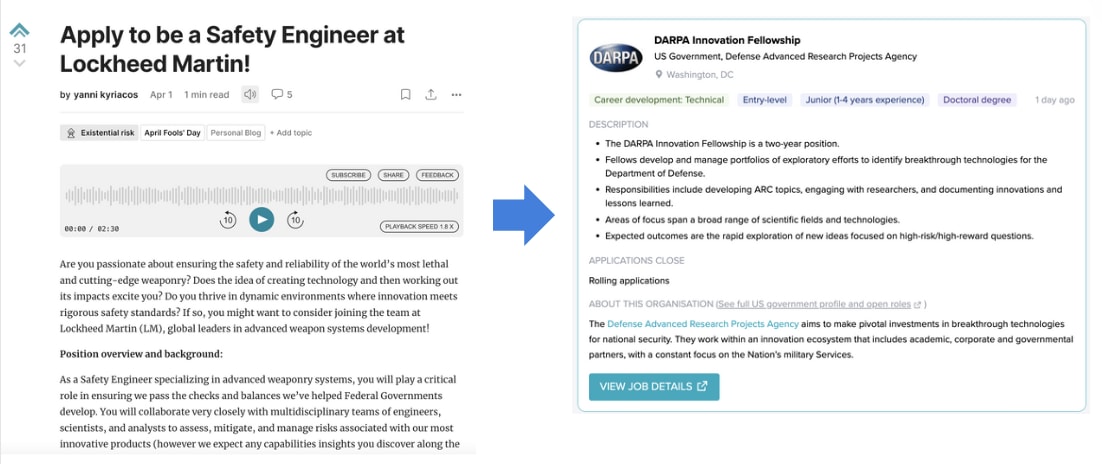

RIP to any posts on anything earnest over the last 48 hours. Maybe in future we don't tag anything April Fools and it is otherwise a complete blackout on serious posts 😅

I think the hilarity is in the confusion / click bait. Your idea would rob us of this! I think the best course of action is for anyone with a serious post to wait until April 3 :|

Toby Tremlett🔹

Not a solution to everything mentioned here, but a reminder that you can click "customize feed" at the top of the page and remove all posts tagged April Fools.

yanni kyriacos

nah let's lean all the way in, for one day a year, the wild west out here.

yanni kyriacos

Damn just had the idea of a "Who wants to be Fired?" post.

Ten months ago I met Australia's Assistant Defence Minister about AI Safety because I sent him one email asking for a meeting. I wrote about that here. In total I sent 21 emails to Politicians and had 4 meetings. AFAICT there is still no organisation with significant funding that does this as their primary activity. AI Safety advocacy is IMO still extremely low hanging fruit. My best theory is EAs don't want to do it / fund it because EAs are drawn to spreadsheets and google docs (it isn't their comparative advantage). Hammers like nails etc.

I also think many EAs are still allergic to direct political advocacy, and that this tendency is stronger in more rationalist-ish cause areas such as AI. We shouldn’t forget Yudkowsky’s “politics is the mind-killer”!

yanni kyriacos

What a cop-out! Politics is a mind-killer if you're incapable of observing your mind.

I think if you work in AI Safety (or want to) it is very important to be extremely skeptical of your motivations for working in the space. This applies to being skeptical of interventions within AI Safety as well.

For example, EAs (like most people!) are motivated to do things they (1) are good at and (2) see as high status (i.e. people very quietly ask themselves 'would someone who I perceive as high status approve of my belief or action?'). Based on this, I am worried that many EAs (1) find protesting AI labs (and advocating for a Pause in general) cringy and/or awkward and (2) ignore the potential impact of organisations such as PauseAI.

We might all literally die soon because of misaligned AI, so what I'm recommending is that anyone seriously considering AI Safety as a career path spends a lot of time on the question of 'what is really motivating me here?'

fwiw i think this works in both directions - people who are "action" focussed probably have a bias towards advocacy / protesting and underweight the usefulness of research.

One axis where Capabilities and Safety people pull apart the most, with high consequences, is "asking for forgiveness instead of permission."

1) Safety people need to get out there and start making stuff without their high prestige ally nodding first 2) Capabilities people need to consider more seriously that they're building something many people simply do not want

[GIF] A feature I'd love on the forum: while posts are read back to you, the part of the text that is being read is highlighted. This exists on Naturalreaders.com and I would love to see it here (great for people who have wandering minds like me)

I agree with you, and so does our issue tracker. Sadly, it does seem a bit hard. Tagging @peterhartree as a person who might be able to tell me that it's less hard than I think.

yanni kyriacos

As someone who works with software engineers, I have respect for how simple-appearing things can actually be technically challenging.

Lorenzo Buonanno🔸

For what it's worth, I would find the first part of the issue (i.e. making the player "floating" or "sticky") already quite useful, and it seems much easier to implement.

I have written 7 emails to 7 Politicians aiming to meet them to discuss AI Safety, and already have 2 meetings.

Normally, I'd put this kind of post on twitter, but I'm not on twitter, so it is here instead.

I just want people to know that if they're worried about AI Safety, believe more government engagement is a good thing and can hold a decent conversation (i.e. you understand the issue and are a good verbal/written communicator), then this could be an underrated path to high impact.

Another thing that is great about it is you can choose how many emails to send and how many meetings to have. So it can be done on the side of a "day job".

Is anyone in the AI Governance-Comms space working on what public outreach should look like if lots of jobs start getting automated in < 3 years?

I point to Travel Agents a lot not to pick on them, but because they're salient and there are lots of them. I think there is a reasonable chance in 3 years that industry loses 50% of its workers (3 million globally).

People are going to start freaking out about this. Which means we're in "December 2019" all over again, and we all remember how bad Government Comms were during COVID.

For the past 8 months, we've (AIS ANZ) been running consistent community meetups across 5 cities (Sydney, Melbourne, Brisbane, Wellington and Canberra). Each meetup averages about 10 attendees with about 50% new participant rate, driven primarily through LinkedIn and email outreach. I estimate we're driving unique AI Safety related connections for around $6.

Volunteer Meetup Coordinators organise the bookings, pay for the Food & Beverage (I reimburse them after the fact) and greet attendees. This ini... (read more)

Solving the AGI alignment problem demands a herculean level of ambition, far beyond what we're currently bringing to bear. Dear Reader, grab a pen or open a google doc right now and answer these questions:

1) What would you do right now if you became 5x more ambitious?

2) If you believe we all might die soon, why aren't you doing the ambitious thing?

I think acting on the margins is still very underrated. E.g. I think 5x the amount of advocacy for a Pause on capabilities development of frontier AI models would be great. I also think in 12 months' time it would be fine for me to reevaluate this take and say something like 'ok that's enough Pause advocacy'.

Basically, you shouldn't feel 'locked in' to any view. And if you're starting to feel like you're part of a tribe, then that could be a bad sign you've been psychographically locked in.

I believe we should worry about the pathology of people like Sam Altman, mostly because of what they're trying to achieve, without the consent of all governments and their respective populace. I found a tweet that summarises my own view better than I can. The pursuit of AGI isn't normal or acceptable and we should call it out as such.

Yesterday Greg Sadler and I met with the President of the Australian Association of Voice Actors. Like us, they've been lobbying for more and better AI regulation from government. I was surprised how much overlap we had in concerns and potential solutions:

1. Transparency and explainability of AI model data use (concern)

2. Importance of interpretability (solution)

3. Mis/dis information from deepfakes (concern)

4. Lack of liability for the creators of AI if any harms eventuate (concern + solution)

5. Unemployment without safety nets for Australians (concern)

6. Rate of capabilities development (concern)

They may even support the creation of an AI Safety Institute in Australia. Don't underestimate who could be allies moving forward!

NotebookLM is basically magic. Just take whatever Forum post you can't be bothered reading but know you should and use NotebookLM to convert it into a podcast.

It seems reasonable that in 6 - 12 months there will be a button inside each Forum post that converts said post into a podcast (i.e. you won't need to visit NotebookLM to do it).

Remember: EA institutions actively push talented people into the companies making the world-changing tech the public have said THEY DON'T WANT. This is where the next big EA PR crisis will come from (50%). Except this time it won’t just be the tech bubble.

Is this about the safety teams at capabilities labs?

If so, I consider it a non-obvious issue whether pushing talented people into an AI safety role at, e.g., DeepMind is a bad thing. If you think that is a bad thing, consider providing a more detailed argument, and writing a top-level post explaining your view.

If, instead, this is about EA institutions pushing people into capabilities roles, consider naming concrete examples. As an example, 80k has a job ad for a prompt engineer role at Scale AI. That does not seem to be a very safety-focused role, and it is not clear how 80k wants to help prevent human extinction with that job ad.

The general public wants frontier AI models regulated and there don't seem to be grassroots-focussed orgs attempting to capture and funnel this energy into influencing politicians. E.g. via this kind of activity. This seems like massively low hanging fruit. An example of an organisation that does this (but for GH&W) is Results Australia. Someone should set up such an org.

My impression is that PauseAI focusses more on media engagement + protests, which I consider a good but separate thing. Results Australia, as an example, focuses (almost exclusively) on having concerned citizens interacting directly with politicians. Maybe it would be a good thing for orgs to focus on separate things (e.g. for reasons of perception + specialisation). I lean in this direction but remain pretty unsure.

5

joepio

Founder of PauseAI here. I know our protests are the most visible, but they are actually a small portion of what we do. People always talk about the protests, but I think we actually had most results through invisible volunteer lobbying. Personally, I've spent way more time sending emails to politicians and journalists, meeting them and informing them of the issues. I wrote an Email Builder to get volunteers to write to their representatives, gave multiple workshops on doing so, and have seen many people (including you of course!) take action and ask for feedback in the discord.

I think combining both protesting and volunteer lobbying in one org is very powerful. It's not a new idea of course - orgs like GreenPeace have been using this strategy for decades. The protests create visibility and attention, and the lobbying gives the important people the right background information. The protests encourage more volunteers to join and help out, so we get more volunteer lobbyists! In my experience the protests also help with getting an in with politicians - it creates a recognizable brand that shows you represent a larger group.

So I did a quick check today - I've sent 19 emails to politicians about AI Safety / x-risk and received 4 meetings. They've all had a really good vibe, and I've managed to get each of them to commit to something small (e.g. email XYZ person about setting up an AI Safety Institute). I'm pretty happy with the hit rate (4/19). I might do another forum quick take once I've sent 50.

I expect (~ 75%) that the decision to "funnel" EAs into jobs at AI labs will become a contentious community issue in the next year. I think that over time more people will think it is a bad idea. This may have PR and funding consequences too.

My understanding is that this has been a contentious issue for many years already.

80,000 hours wrote a response to this last year, Scott Alexander had written about it in 2022, and Anthropic split from OpenAI in 2021. Do you mean that you expect this to become significantly more salient in the next year?

1

yanni kyriacos

Thanks for the reply Lorenzo! IMO it is going to look VERY weird seeing people continue to leave labs while EA fills the leaky bucket.

1

yanni kyriacos

Yeah my prediction lacked specificity. I expect it to become quite heated. I'm imagining 10+ posts on the topic next year with a total of 100+ comments. That's just on the forum.

4

OllieBase

I'd probably bet against this happening FWIW. Maybe a Manifold market?

Also, 100+ comments on the forum might not mean it's necessarily "heated"—a back and forth between two commenters can quickly increase the tally on any subject so that part might also need specifying further.

1

yanni kyriacos

I spent about 30 seconds thinking about how to quantify my prediction. I'm trying to point at something vague in a concrete way but failing. This also means that I don't think it is worth my time making it more concrete. The initial post was more of the "I am pretty confident this will be a community issue, just a heads up".

Possibly a high effort low reward suggestion for the forum team but I’d love (with a single click) to be able to listen to forum posts as a podcast via Google’s NotebookLM. I think this could increase my content consumption of long form posts by about 2x.

I often see EA orgs looking to hire people to do Z, where applicants don't necessarily need experience doing Z to be successful.

E.g. instead of saying "must have minimum 4 years as an Operations Manager", they say "we are hiring for an Operations Manager. But you don't need experience as an Operations Manager, as long as you have competencies/skills (insert list) A - F."

This reminds me of when Bloomberg spent over $10M training a GPT-3.5 class AI on their own financial data, only to find that GPT-4 beat it on almost all finance tasks.

If someone claims to have competencies A-F required to be an Operations Manager, they should have some way of proving that they actually have competencies A-F. A great way for them to prove this is to have 4 years of experience as an Operations Manager. In fact, I struggle to think of a better way to prove this, and obviously a person with experience is preferable to someone with no experience for all kinds of reasons (they have encountered all the little errors, etc). As a bonus, somebody outside of your personal bubble has trusted this person.

EA should be relying on more subject matter experience, not less.

I beta tested a new movement building format last night: online networking. It seems to have legs.

V quick theory of change:

> problem it solves: not enough people in AIS across Australia (especially) and New Zealand are meeting each other (this is bad for the movement and people's impact).

> we need to brute force serendipity to create collabs.

> this initiative has v low cost

quantitative results:

> I purposefully didn't market it hard because it was a beta. I literally got more people than I hoped for

> 22 RSVPs and 18 attendees

> this s... (read more)

A piece of career advice I've given a few times recently to people in AI Safety, which I thought worth repeating here, is that AI Safety is so nascent a field that the following strategy could be worth pursuing:

1. Write your own job description (whatever it is that you're good at / brings joy into your life).

2. Find organisations that you think need that job but don't yet have it. This role should solve a problem they either don't know they have or haven't figured out how to solve.

3. Find the key decision maker and email them. Explain the (their) pro... (read more)

If transformative AI is defined by its societal impact rather than its technical capabilities (i.e. TAI as process not a technology), we already have what is needed. The real question isn't about waiting for GPT-X or capability Y - it's about imagining what happens when current AI is deployed 1000x more widely in just a few years. This presents EXTREMELY different problems to solve from a governance and advocacy perspective.

E.g. 1: compute governance might no longer be a good intervention

E.g. 2: "Pause" can't just be about pausing model development. It should also be about pausing implementation across use cases

It breaks my heart when I see eulogy posts on the forum. And while I greatly appreciate people going to the effort of writing them (while presumably experiencing grief), it still doesn't feel like enough. We're talking about people that dedicated their lives to doing good, and all they get is a post. I don't have a suggestion to address this 'problem', and some may even feel that a post is enough, but I don't. Maybe there is no good answer and death just sucks. I dunno.

To me, your examples at the micro level don't make the case that you can't solve a problem at the level it's created. I'm agnostic as to whether CBT or meta-cognitive therapy is better for intrusive thoughts, but lots of people like CBT; and as for 'doing the dishes', in my household we did solve the problem of conflicts around chores by making a spreadsheet. And to the extent that working on communication styles is helpful, that's because people (I'd claim) have a problem at the level of communication styles.

I am 90% sure that most AI Safety talent aren't thinking hard enough about Neglectedness. The industry is so nascent that you could look at 10 analogous industries, see what processes or institutions are valuable and missing and build an organisation around the highest impact one.

The highest impact job ≠ the highest impact opportunity for you!

I feel like you're making this sound simple when I'd expect questions like this to involve quite a bit of work, and potentially skills and expertise that I wouldn't expect people at the start of their careers to have yet.

Do you have any specific ideas for something that seems obviously missing to you?

AI Safety (in the broadest possible sense, i.e. including ethics & bias) is going to be taken very seriously soon by Government decision makers in many countries. But without high quality talent staying in their home countries (i.e. not moving to UK or US), there is a reasonable chance that x/c-risk won’t be considered problems worth trying to solve. X/c-risk sympathisers need seats at the table. IMO local AIS movement builders should be thinking hard about how to either keep talent local (if they're experiencing brain drain) OR increase the amount of local talent coming into x/c-risk Safety, such that outgoing talent leakage isn't a problem.

What types of influence do you think governments from small, low influence countries will be able to have?

For example, the NZ government - aren't they price-takers when it comes to AI regulation? If you're not a significant player, don't have significant resources to commit to the problem, and don't have any national GenAI companies - how will they influence the development trajectory of AI?

1

[anonymous]

That's one way, but countries' (and other bodies') concerns are made up of both their citizens'/participants' concerns and other people's concerns. One is valued differently from the other, but there are other ways of keeping relevant actors from thinking it isn't a problem (namely, utilizing the UN). You make a good point though.

My long thoughts:

1. 80k don't claim to only advertise impactful jobs

They also advertise jobs that help build career impact, and they're not against posting jobs that cause harm (and it's often/always not clear which is which). See more in this post.

They sometimes add features like marking "recommended orgs" (which I endorse!), and sometimes remove those features ( 😿 ).

2. 80k's career guide about working at AI labs doesn't dive into "which lab"

See here. Relevant text:

I think [link to comment] the "which lab" question is really important, and I'd encourage 80k to either be opinionated about it, or at least help people make up their mind somehow, not just leave people hanging on "which lab" while also often recommending people go work at AI labs, and also mentioning that often that work is net-negative and recommending reducing the risk by not working "in certain positions unless you feel awesome about the lab".

[I have longer thoughts on how they could do this, but my main point is that it's (imo) an important hole in their recommendation that might be hidden from many readers]

3. Counterfactual / With great power comes great responsibility

If 80k wouldn't do all this, should we assume there would be no job board and no guides?

I claim that something like a job board has critical mass: Candidates know the best orgs are there, and orgs know the best candidates are there.

Once there's a job board with critical mass, it's not trivial to "compete" with it.

But EAs love opening job boards. A few new EA job boards pop up every year. I do think there would be an alternative. And so the question seems to me to be - how well are 80k using their critical mass?

4. What results did 80k's work actually cause?

First of all: I don't actually know, and if someone from 80k would respond, that would be way better than my guess.

Still, here's my guess, which I think would be better than just responding to the poll:

* Lots of engineers who care about AI Safet

3

Guy Raveh

And there's always the other option that I (unpopularly) believe in - that better publicly available AI capabilities are necessary for meaningful safety research, thus AI labs have contributed positively to the field.

Three million people are employed by the travel (agent) industry worldwide. I am struggling to see how we don't lose 80%+ of those jobs to AI Agents in 3 years (this is ofc just one example). This is going to be an extremely painful process for a lot of people.

I think the greater threat to travel agents was the rise of self-planning travel internet sites like Skyscanner and Booking.com which make them mostly unnecessary. If travel agents have survived that, I don't see how they wouldn't survive LLMs: presumably one of the reasons they survived is the preference for talking in person to a human being.

5

huw

Yep, I know people who even opt against self-checkout because they enjoy things like a sense of community! I don't claim AI isn't going to greatly disrupt a number of jobs, but service jobs in particular seem like some of the most resistant, especially if people get wealthier from AI and have more money to spend on niceties.

-1

yanni kyriacos

Avatars are going to be extremely high fidelity. It will feel like a zoom call with a colleague, except you’re booking travel.

I'm not sure it's going to feel like a zoom call with a colleague any time soon. That's a pretty high bar IMO that we ain't anywhere near yet. Many steps aren't there yet, including:

(I'm probably misrepresenting a couple of these due to lack of expertise but something like...)

1) Video quality (especially being rendered real time)

2) Almost insta-replying

3) Facial warmth and expressions

4) LLM sounding exactly like a real person (this one might be closest)

5) Reduction in processing power required for this to be a norm. Having thousands of these going simultaneously is going to need a lot. (again less important)

I would bet against Avatars being this high fidelity and in common use in 3 years because I think LLM progress is tailing off and there are multiple problems to be solved to get there - but maybe I'm a troglodyte...

That would not contribute to my friends’ sense of community or trust in other people (or, for that matter, the confidence that if a real service person gives you a bad experience or advice, that you have a path to escalate it)

This is an extremely "EA" request from me but I feel like we need a word for people (i.e. me) who are Vegans but will eat animal products if they're about to be thrown out. OpportuVegan? UtilaVegan?

I think the term I've heard (from non-EAs) is 'freegan' (they'll eat it if it didn't cause more animal products to be purchased!)

1

yanni kyriacos

This seems close enough that I might co-opt it :)

https://en.wikipedia.org/wiki/Freeganism

3

Toby Tremlett🔹

If you predictably do this, you raise the odds that people around you will cook some/ buy some extra food so that it will be "thrown out", or offer you food they haven't quite finished (and that they'll replace with a snack later).

So I'd recommend going with "Vegan" as your label, for practical as well as signalling reasons.

3

yanni kyriacos

Yeah this is a good point, which I've considered, which is why I basically only do it at home.

I think https://www.wakingup.com/ should be considered for effective organisation status. It donates 10% of revenue to the most effective causes and I think reaching nondual states of awakening could be one of the most effective ways for people in rich countries to improve their wellbeing.

Also related (though more tangentially): https://podcast.clearerthinking.org/episode/167/michael-taft-and-jeremy-stevenson-glimpses-of-enlightenment-through-nondual-meditation/

This brief post might contain many relevant points: https://magnusvinding.com/2020/10/03/underappreciated-consequentialist-reasons/

2

yanni kyriacos

thanks!

1

Pat Myron 🔸

Here's a list of non-animal welfare concerns about animal farming: https://forum.effectivealtruism.org/posts/rpBYejrhk7HQB6gJj/crispr-for-happier-farm-animals?commentId=hvCQ9kBvutrnkFm9h

Imagine running 100 simulations of humanity's story. In every single one, the same pattern emerges: The moment we choose agriculture over hunting and gathering, we unknowingly start a countdown to our own extinction through AGI. If this were true, I think it suggests that our best chance at long-term survival is to stay as hunter-gatherers - and that what we call 'progress' is actually a kind of cosmic trap.

What makes the agriculture transition stand out as the "point of no return"? Agriculture was independently invented several times, so I'd expect the hunter-gatherer -> agriculture transition to be more convergent than agriculture -> AGI.

2

yanni kyriacos

I mean, I'm mostly riffing here, but according to Claude: "Agriculture independently developed in approximately 7-12 major centers around the world."

If we ran the simulation 100 times, would AGI appear in > 7-12 centres around the world? Maybe, I dunno.

Anyway, Happy Friday det!

Two jobs in AI Safety Advocacy that AFAICT don't exist, but should and probably will very soon. Will EAs be the first to create them though? There is a strong first mover advantage waiting for someone -

1. Volunteer Coordinator - there will soon be a groundswell from the general population wanting to have a positive impact in AI. Most won't know how to. A volunteer manager will help capture and direct their efforts positively, for example, by having them write emails to politicians

2. Partnerships Manager - the President of the Voice Actors guild reached out... (read more)

More thoughts on "80,000 hours should remove OpenAI from the Job Board"

- - - -

A broadly robust heuristic I walk around with in my head is "the more targeted the strategy, the more likely it will miss the target".

Its origin is from when I worked in advertising agencies, where TV ads for car brands all looked the same because they needed to reach millions of people, while email campaigns were highly personalised.

The thing is, if you get hit with a tv ad for a car, worst case scenario it can't have a strongly negative effect because of its generic... (read more)

Thanks Yanni, I think a lot of people have been concerned about this kind of thing.

I would be surprised if 80,000 hours isn't already tracking this or something like it - perhaps try reaching out to them directly, you might get a better response that way

I always get downvoted when I suggest that (1) if you have short timelines and (2) you decide to work at a Lab then (3) people might hate you soon and your employment prospects could be damaged.

What is something obvious I'm missing here?

One thing I won't find convincing is someone pointing at the Finance industry post GFC as a comparison.

I believe the scale of unemployment could be much higher. E.g. 5% ->15% unemployment in 3 years.

If you have short timelines, I feel like your employment prospects in a fairly specific hypothetical should be fairly low on your priority list compared to ensuring AI goes well? Further, if you have short timelines, knowing about AI seems likely to be highly employable and lab experience will be really useful (until AI can do it better than us...)

I can buy "you'll be really unpopular by affiliation and this will be bad for your personal life" as a more plausible argument

2

yanni kyriacos

Hi! Some thoughts:

"If you have short timelines, I feel like your employment prospects in a fairly specific hypothetical should be fairly low on your priority list compared to ensuring AI goes well?"

- Possibly! I'm mostly suggesting that people should be aware of this possibility. What they do with that info is obvs up to them

"if you have short timelines, knowing about AI seems likely to be highly employable and lab experience will be really useful"

- The people I'm pointing to here (e.g. you) will be employable and useful. Maybe we're talking past each other a bit?

5

Neel Nanda

Why do you believe this? Being an expert in AI is currently very employable, and seems all the more so if AI is automating substantial parts of the economy

2

yanni kyriacos

Ugh I wrote the wrong thing here, my bad (will update comment).

Should have said

"- The people I'm pointing to here (e.g. you) will be employable and useful. Maybe we're talking past each other a bit?"

3

Neel Nanda

I'm very confused. Your top level post says "your employment prospects could be damaged." I am explaining why I think that the skillset of lab employees will be way more employable. Is your argument that the PR will outweigh that, even though it's a major factor?

5

Larks

Financial workers' careers are not directly affected very much by populist prejudice because 1) they provide a useful service and ultimately people pay for services and 2) they are employed by financial firms who do not share that prejudice. Likewise in short timeline worlds AI companies are probably providing a lot of services to the world, allowing them to pay high compensation, and AI engineers would have little reason to want to work elsewhere. So even if they are broadly disliked (which I doubt) they could still have very lucrative careers.

Obviously it's a different story if antipathy towards the financial sector gets large enough to lead to a communist takeover.

During the financial crisis U-3 rose from 5% to 10% over about 2 years, suggesting on this metric at least you should think it is at least comparable (2.5%/year vs 3.3%/year).
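As a rough check on the arithmetic in that comparison, here is a minimal sketch. It assumes both figures are simple averages in percentage points per year; the function and variable names are illustrative only and not from the thread.

```python
def pp_per_year(start_pct, end_pct, years):
    """Average change in the unemployment rate, in percentage points per year."""
    return (end_pct - start_pct) / years

# Financial crisis figure quoted above: U-3 from 5% to 10% over about 2 years.
gfc = pp_per_year(5, 10, 2)

# Hypothetical scenario from the parent comment: 5% to 15% in 3 years.
scenario = pp_per_year(5, 15, 3)

print(f"GFC: {gfc:.1f} pp/year, scenario: {scenario:.1f} pp/year")
```

On these assumptions the two rates come out at about 2.5 and 3.3 percentage points per year, matching the figures quoted in the comment.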

4

huw

Just on that last prediction: that's an extraordinary claim given that the U.S. unemployment rate has only increased 0.7% since ChatGPT was released nearly 2 years ago (harder to compare against GPT-3, which was released in 2020, but you could say the same about the economic impacts of recent LLMs more broadly). If you believe we're on the precipice of some extraordinary increase, why stop at 15%? Or if we're not on that precipice, what's the mechanism for that 10% jump?

2

yanni kyriacos

Heya mate, it is an extraordinary claim, depending on how powerful you expect the models to be and in what timeframe!

"U.S. unemployment rate has only increased 0.7% since ChatGPT was released nearly 2 years ago"

"what's the mechanism for that 10% jump?"

"Pens Down" to mean 'Artificial Super Intelligence in my opinion is close enough that it no longer makes sense to work on whatever else I'm currently working on, because we're about to undergo radical amounts of change very soon/quickly'.

For me, it is probably when we have something as powerful as GPT-4 except it is agentic and costs less than $100 / month. So, that looks like a digital personal assistant that can execute an instruction like "have a TV delivered for me by X date, under Y price and organise installation and wall mounting."

This is obviously a question mainly for people who don't work full time on AI Safety.

I don't know if this can be answered in full-generality.

I suppose it comes down to things like:

• Financial runway/back-up plans in case your prediction is wrong

• Importance of what you're doing now

• Potential for impact in AI safety

1

yanni kyriacos

I agree. I think it could be a useful exercise though to make the whole thing (ASI) less abstract.

I find it hard to reconcile that (1) I think we're going to have AGI soon and (2) I haven't made more significant life changes.

I don't buy the argument that much shouldn't change (at least, in my life).

2

Chris Leong

Happy to talk that through if you'd like, though I'm kind of biased, so probably better to speak to someone who doesn't have a horse in the race.

1

yanni kyriacos

I suppose it is plausible for a person to never have a "Pens Down" moment if:

1. There is a FOOM

2. They feel they won't be able to positively contribute to making ASI safe / slowing it down

1

yanni kyriacos

I'm somewhat worried we're only 2-3 years from this, FWIW. I'd give it a ~ 25% chance.

I'm pretty confident that a majority of the population will soon have very negative attitudes towards big AI labs. I'm extremely unsure about what impact this will have on the AI Safety and EA communities (because we work with those labs in all sorts of ways). I think this could increase the likelihood of "Ethics" advocates becoming much more popular, but I don't know if this necessarily increases catastrophic or existential risks.

Anecdotally I feel like people generally have very low opinion of and understanding of the finance sector, and the finance sector mostly doesn't seem to mind. (There are times when there's a noisy collision, e.g. Melvin Capital vs. GME, but they're probably overall pretty rare.)

It's possible / likely that AI and tech companies targeting mass audiences with their product are more dependent on positive public perception, but I suspect that the effect of being broadly hated is less strong than you'd think.

2

yanni kyriacos

I think the amount of AI-caused turmoil I’m expecting in 3-5 years might be much higher than what you’re expecting, which accounts for most of this difference.

6

titotal

The public already had a negative attitude towards the tech sector before the AI buzz. In 2021, 45% of Americans had a somewhat or very negative view of tech companies.

I doubt the prevalence of AI is making people more positive towards the sector given all the negative publicity over plagiarism, job loss, and so on. So I would guess the public already dislikes AI companies (even if they use their products), and this will probably increase.

2

yanni kyriacos

Yeah the problem with some surveys is they measure prompted attitudes rather than salient ones.

3

anormative

Can you elaborate on what makes you so certain about this? Do you think that the reputation will be more like that of Facebook or that of Big Tobacco? Or will it be totally different?

2

yanni kyriacos

Basically, I think there is a good chance we have 15% unemployment rates in less than two years caused primarily by digital agents.

2

yanni kyriacos

Totally different. I had a call with a voice actor who has colleagues hearing their voices online without remuneration. Tip of the iceberg stuff.

The Greg / Vasco bet reminded me of something: I went to buy a ceiling fan with a light in it recently. There was one on sale that happened to also tick all my boxes, joy! But the salesperson warned me "the light in this fan can't be replaced and only has 10,000 hours in it. After that you'll need a new fan. So you might not want to buy this one." I chuckled internally and bought two of them, one for each room.

Has anyone seen an analysis that takes seriously the idea that people should eat some fruits, vegetables and legumes over others based on how much animal suffering they each cause?

I.e. don't eat X fruit, eat Y one instead, because X fruit is [e.g.] harvested in Z way, which kills more [insert plausibly sentient creature].

A judgement I'm attached to is that a person is either extremely confused or callous if they work in capabilities at a big lab. Is there some nuance I'm missing here?

Some of them have low p(doom from AI) and aren't longtermists, which justifies the high-level decision of working on an existentially dangerous technology with sufficiently large benefits.

I do think their actual specific actions are not commensurate with the level of importance or moral seriousness that they claim to attach to their work, given those stated motivations.

1

yanni kyriacos

Thanks for the comment Linch! Just to spell this out:

"Some of them have low p(doom from AI) and aren't longtermists, which justifies the high-level decision of working on an existentially dangerous technology with sufficiently large benefits."

* I would consider this acceptable if their p(doom) was ~ < 0.01%

* I find this pretty hard to believe TBH

"I do think their actual specific actions are not commensurate with the level of importance or moral seriousness that they claim to attach to their work, given those stated motivations."

* I'm a bit confused by this part. Are you saying you believe the importance / seriousness the person claims their work has is not reflected in the actions they actually take? In what way are you saying they do this?

4

Linch

I dunno man, at least some people think that AGI is the best path to curing cancer. That seems like a big deal! If you aren't a longtermist at all, speeding up the cure for cancer by a year is probably worth quite a bit of x-risk.

Yes.

Lab people shitpost, lab people take competition extremely seriously (if your actual objective is "curing cancer," it seems a bit discordant to be that worried that Silicon Valley Startup #2 will cure cancer before you), people take infosec not at all seriously, the bizarre cultish behavior after the Sam Altman firing, and so forth.

1

Closed Limelike Curves

In my experience, they're mostly just impulsive and have already written their bottom line ("I want to work on AI projects that look cool to me"), and after that they come up with excuses to justify this to themselves.

1

yanni kyriacos

Hi! Thanks for your comment :)

I basically file this under "confused".

2

Linch

Interesting, in your ontology I'd much more straightforwardly characterize it as "callous"

1

yanni kyriacos

Sorry, I will explain further for clarity. I am Buddhist and in that philosophy the phrase "confused" has an esoteric usage. E.g. "that person suffers because they're confused about the nature of reality". I think this lab person is confused about how much suffering they're going to create for themselves and others (i.e. it hasn't been seen or internalised).

1

Dicentra

Yes. I think most people working on capabilities at leading labs are confused or callous (or something similar, like greedy or delusional), but definitely not all. And personally, I very much hope there are many safety-concerned people working on capabilities at big labs, and am concerned about the most safety-concerned people feeling the most pressure to leave, leading to evaporative cooling.

Reasons to work on capabilities at a large lab:

* To build career capital of the kind that will allow you to have a positive impact later. E.g. to be offered relevant positions in government

* To positively influence the culture of capabilities teams or leadership at labs.

* To be willing and able to whistleblow bad situations (e.g. seeing emerging dangerous capabilities in new models, the non-disparagement stuff).

* [maybe] to earn to give (especially if you don't think you're contributing to core capabilities)

To be clear, I expect achieving the above to be infeasible for most people, and it's important for people to not delude themselves into thinking they're having a positive impact to keep enjoying a lucrative, exciting job. But I definitely think there are people for whom the above is feasible and extremely important.

Another way to phrase the question is "is it good for all safety-concerned people to shun capabilities teams, given (as seems to be the case) that those teams will continue to exist and make progress by default?" And for me the strong answer is "no". Which is totally consistent with wanting labs to pause and thinking that just contributing to capabilities (on frontier models) in expectation is extremely destructive.

1

yanni kyriacos

Thanks so much for your thoughtful comment! I appreciate someone engaging with me on this rather than just disagree ticking. Some thoughts:

1. Build Career Capital for Later Impact:

* I think this depends somewhat on what your AGI timelines are. If they're short, you've wasted your time and possibly had a net-negative effect.

* I think there is a massive risk of people entering an AGI lab for career-capital reasons, and then post-rationalising their decision to stay. Action changes attitudes faster than attitude changes actions, after all.

2. Influence the Culture:

* Multiple board members who attempted this at OpenAI got fired; what chance does a single lower-level employee have?

* I have tried changing corporate culture at multiple organisations and it is somewhere between extremely hard and impossible (more on that below).

3. Be Prepared to Whistle-Blow:

* I was a Whistle-Blower during my time at News Corp. It was extremely difficult. I simply do not expect a meaningful number of people to be able to do this. You have to be willing to turn your life upside down.

* This can be terrible for a person's mental health. We should be very careful about openly recommending this as a reason to stay at a Lab.

* As mentioned above, I expect a greater number of people to turn into converts than would-be Whistle-Blowers

* I think if you're the kind of person who goes into a Lab for Career Capital that makes you less likely to be a Whistle-Blower TBH.

4. Earn to Give:

* Sorry, you can make good money elsewhere.

1

Dicentra

(1) I agree if your timelines are super short, like <2yrs, it's probably not worth it. I have a bunch of probability mass on longer timelines, though some on really short ones

Re (2), my sense is some employees already have had some of this effect (and many don't. But some do). I think board members are terrible candidates for changing org culture; they have unrelated full-time jobs, they don't work from the office, they have different backgrounds, most people don't have cause to interact with them much. People who are full-time, work together with people all day every day, know the context, etc., seem more likely to be effective at this (and indeed, I think they have been, to some extent in some cases)

Re (3), seems like a bunch of OAI people have blown the whistle on bad behavior already, so the track record is pretty great, and I think them doing that has been super valuable. And the good done by one whistleblower seems to far outweigh the harm of several converts. I agree it can be terrible for mental health for some people, and people should take care of themselves.

Re (4), um, this is the EA Forum, we care about how good the money is. Besides crypto, I don't think there are many ways for many of the relevant people to make similar amounts of money on similar timeframes. Actually I think working at a lab early was an effective way to make money. A bunch of safety-concerned people for example have equity worth several millions to tens of millions, more than I think they could have easily earned elsewhere, and some are now billionaires on paper. And if AI has the transformative impact on the economy we expect, that could be worth way more (and it being worth more is correlated with it being needed more, so extra valuable); we are talking about the most valuable/powerful industry the world has ever known here, hard to beat that for making money. I don't think that makes it okay to lead large AI labs, but for joining early, especially doing some capabilities work that doesn't push the mos

I think the average EA worries too much about negative PR related to EA. I think this is a shame because EA didn't get to where it is now because it concerned itself with PR. It got here through good-old-fashioned hard work (and good thinking ofc).

Two examples:

1. FTX.

2. OpenAI board drama.

On the whole, I think there was way too much time spent thinking and talking about these massive outliers and it would have been better if 95%+ of EAs put their head down and did what they do best - get back to work.

I think it is good to discuss and take action...

I really like this ad strategy from BlueDot. 5 stars.

90% of the message is still x / c-risk focussed, but by including "discrimination" and "loss of social connection" they're clearly trying to do either (or both) of the following:

create a big tent

nudge people that are in "ethics" but sympathetic to x / c-risk into x / c-risk

This feels like misleading advertising to me, particularly "Election interference" and "Loss of social connection", unless BlueDot is running a very different curriculum now and has a very different understanding of what alignment is. These bait-and-switch tactics might not be a good way to create a "big tent".

I expect that over the next couple of years GenAI products will continue to improve, but concerns about AI risk won't. For some reason we can't "feel" the improvements. Then (e.g. around 2026) we will have pretty capable digital agents and there will be a surge of concern (similar to when ChatGPT was released, maybe bigger), and then possibly another perceived (but not real) plateau.

We seem to be boiling the frog; however, I'm optimistic (perhaps naively) that GPT voice mode may wake some people up. "Just a chatbot" doesn't carry quite the same weight when it's actually speaking to you.

2

titotal

The concerns in this case seem to be proportional to the actual real-world impact: the jump from ChatGPT and Midjourney not existing to being available to millions was extremely noticeable. Suddenly the average person could chat with a realistic bot and use it to cheat on their homework, or conjure up a realistic-looking image from a simple text prompt.

In contrast, for the average person, not a lot has changed from 2022 to now. The chatbots make fewer errors and the images are a lot better, but they aren't able to accomplish significantly more actual tasks than they were in 2022. Companies are shoving AI tools into everything, but people are mostly ignoring them.

Even though I've been in the AI Safety space for ~ 2 years, I can't shake the feeling that every living thing dying painlessly in its sleep overnight (due to AI killing us) isn't as bad as (i.e. is 'better' than) hundreds of millions of people living in poverty and/or hundreds of billions of animals being tortured.

This makes me suspicious of my motivations. I think I do the work partly because I kinda feel the loss of future generations, but mainly because AI Safety still feels so neglected (and my counterfactual impact here is larger).

Thanks for sharing. I suspect most of the hundreds of millions of people living in poverty would disagree with you, though, and would prefer not to painlessly die in their sleep tonight.

I think it's possible we're talking past each other?

5

NickLaing

I don't think he's talking past you. His point seems to be that the vast majority of the hundreds of millions of people living in poverty both have net positive lives and don't want to die.

Even with a purely hedonistic outlook, it wouldn't be better for their lives to end.

Unless you are not talking about the present, but a future far worse than today's situation?

1

yanni kyriacos

I'm saying that on some level it feels worse to me that 700 million people suffer in poverty than every single person dying painlessly in their sleep. Or that billions of animals are in torture factories. It sounds like I'm misunderstanding Jason's point?

2

NickLaing

I would contend they are not "suffering" in poverty overall, because most of their lives are net positive. There may be many struggles and their lives are a lot harder than ours, but still better than not being alive at all.

I agree with you on the animals in torture factories, because their lives are probably net negative unlike the 700 million in poverty.

3

titotal

If AI actually does manage to kill us (which I doubt), it will not involve everybody dying painlessly in their sleep. That is an assumption of the "FOOM to god AI with no warning" model, which bears no resemblance to reality.

The technology to kill everyone on earth in their sleep instantaneously does not exist now, and will not exist in the near-future, even if AGI is invented. Killing everyone in their sleep is orders of magnitude more difficult than killing everyone awake, so why on earth would that be the default scenario?

2

Stephen Clare

I think you have a point with animals, but I don't think the balance of human experience means that non-existence would be better than the status quo.

Will talks about this quite a lot in ch. 9 of WWOTF ("Will the future be good or bad?"). He writes:

And, of course, for people at least, things are getting better over time. I think animal suffering complicates this a lot.

Attention: AI Safety Enthusiasts in Wellington (and New Zealand)

I'm pleased to announce the launch of a brand new Facebook group dedicated to AI Safety in Wellington: AI Safety Wellington (AISW). This is your local hub for connecting with others passionate about ensuring a safe and beneficial future with artificial intelligence / reducing x-risk. To kick things off, we're hosting a super casual meetup where you can:

Meet & Learn: Connect with Wellington's AI Safety community.