Edit to add (9/1/2023): This post was written quickly and I judged things prematurely. I also regret not reaching out to Effective Ventures before posting it. Regarding my current opinion on the Abbey: I don't have anything really useful to say that isn't mentioned by others. The goal of this post was to ask a question and gather information, mostly because I was very surprised. I don't have a strong opinion on the purchase anymore and the ones I have are with high uncertainty. More thoughts in my case for transparent spending.

Yesterday morning I woke up and saw this tweet by Émile Torres: https://twitter.com/xriskology/status/1599511179738505216

I was shocked, angry and upset at first. Especially since it appears that the estate was for sale last year for 15 million pounds: https://twitter.com/RhiannonDauster/status/1599539148565934086

I'm not a big fan of Émile's writing and how they often misrepresent the EA movement. But that's not what this question is about, because they do raise a good point here: Why did CEA buy this property? My trust in CEA has been a bit shaky lately, and this doesn't help.

Apparently it was already mentioned in the New Yorker piece: https://www.newyorker.com/magazine/2022/08/15/the-reluctant-prophet-of-effective-altruism

"Last year, the Centre for Effective Altruism bought Wytham Abbey, a palatial estate near Oxford, built in 1480. Money, which no longer seemed an object, was increasingly being reinvested in the community itself."

For some reason I glanced over it at the time, or I just didn't realize the seriousness of it.

Upon more research, I came across this comment by Shakeel Hashim: "In April, Effective Ventures purchased Wytham Abbey and some land around it (but <1% of the 2,500 acre estate you're suggesting). Wytham is in the process of being established as a convening centre to run workshops and meetings that bring together people to think seriously about how to address important problems in the world. The vision is modelled on traditional specialist conference centres, e.g. Oberwolfach, The Rockefeller Foundation Bellagio Center or the Brocher Foundation.

The purchase was made from a large grant made specifically for this. There was no money from FTX or affiliated individuals or organizations." https://forum.effectivealtruism.org/posts/Et7oPMu6czhEd8ExW/why-you-re-not-hearing-as-much-from-ea-orgs-as-you-d-like?commentId=uRDZKw24mYe2NP4eq

I'm very relieved to hear money from individual donors wasn't used. And the <1% suggests 15 million pounds perhaps wasn't spent. Still, I'd love to hear and understand more about this project and why CEA thinks it's cost-effective. What is the EV calculation behind it?

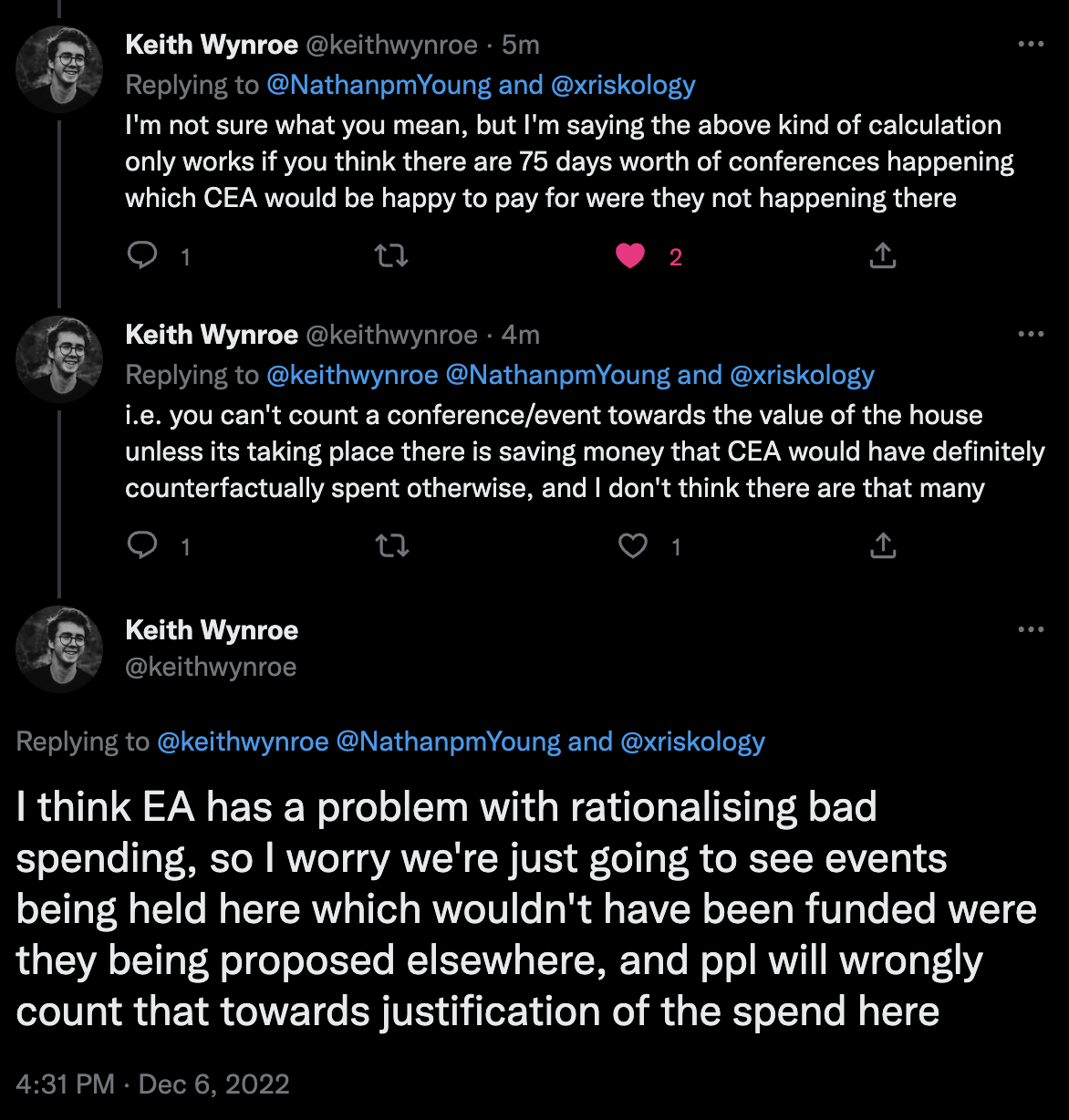

Like the New Yorker piece points out, with more funding there has been a lot of spending within the movement itself. And that's fine, great even. This way more outreach can be done and the movement can grow. But we don't want to be too self-serving, and I'm scared too much of this thinking will lead to rationalizing lavish expenses (and I'm afraid this is already happening). There needs to be more transparency behind big expenses.

Edit to add: If this expense was made a while back, why not announce it then?

This comment sounds qualitatively reasonable, but it needs a quantitative complement - it could have been made virtually verbatim had the cost been £1.5m or £150m. I would like to hear the case for why it was actually worth £15m.

Also, a lot of people are talking about 'optics', with the implication that the irrational public will misunderstand the +EV of such a decision. But 'bad optics' don't come from nowhere - they come from a very reasonable worry that over time, people who influence a lot of money have some risk of, if not becoming corrupt, at least getting carried away with that influence and rationalising away things like this.

I think we should always take such possibilities seriously, not to imply anyone has actually done anything wildly irresponsible, but to insure against anyone doing so - and to keep grey areas as thin as possible. And I'm increasingly worried that CEA are seriously undertransparent in ways that suggest they don't think such risks could materialise - which increases my credence that they could. So while I could be convinced this was a reasonable use of funds, I think the decision not to 'hype' it builds a dangerous precedent.

You can check how many events GPI, FHI, and CEA have run in Oxford, requiring renting hotels, etc., and the associated costs. I know that GPI runs at least a couple such events per year. Given that, I think that over the next 10-20 years, £15m isn't outside the realm of plausible direct costs saved, especially if it's available for other groups to rent in order to help cover costs.
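A minimal sketch of the break-even arithmetic implied here, using the £15m figure discussed in the thread and the 10-20 year horizon. Everything else is a simplifying assumption for illustration: no discounting, no resale value, no upkeep costs, and no rental income.

```python
def required_annual_savings(purchase_price_gbp: float, years: int) -> float:
    """Yearly cost savings needed to recoup the purchase price.

    Simplifying assumptions (not from the thread): no discounting,
    no resale value, no upkeep costs, no rental income.
    """
    if years <= 0:
        raise ValueError("years must be positive")
    return purchase_price_gbp / years


PURCHASE_PRICE_GBP = 15_000_000  # figure discussed in the thread

for horizon_years in (10, 20):
    needed = required_annual_savings(PURCHASE_PRICE_GBP, horizon_years)
    print(f"{horizon_years} years: £{needed:,.0f} per year")
```

Under these assumptions, the venue would need to displace about £1.5m of event costs per year over 10 years, or about £0.75m per year over 20. Any resale value or rental income would lower that bar, and upkeep would raise it.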

That said, the cost-benefit analysis could be more transparent. On the other hand, I don't think that private donors should be required to justify their decisions, regardless of the vehicle used.

But I do think that CEA is the wrong place for this to be done, given that they aren't even likely to be a key user of the space. (Edit: Owen's explanation, that this was done by the parent org of CEA, means I will withdraw the last claim.)

I accept that it's plausibly in the realm, but that's not very helpful for knowing whether it's actually worthwhile - plausibility is a very low bar.

This doesn't seem like a good blanket policy. If private donors can use a charity to buy large luxury goods, it raises worries about that charity becoming a tax haven, or a reward for favours, or any other number of such hard-to-predefine but questionable activities. There are legal implications around charities taking too much of their money from a single donor for exactly that reason.

I don't think we're there yet, but, per above, I would like to see more discussion from CEA of the risks associated with moving in that direction.

Agree. I don't mind the idea of a sort of EA special projects org that has relatively high autonomy, but I don't want that org to also be the face of the community - as I understand it, that's basically how we ended up with FTXgate. We'd also probably want them to source funding from a wide range of sources to avoid the unilateralist's curse, which this situation is at least flirting with.

I'm telling you that, as someone who hasn't ever worked for or with CEA directly, I spoke with a couple people about this months before it happened. Clearly, plenty of people were aware, and discussed this - and I didn't know the price tag, but thought that a center for retreats focused on global priorities and related topics near Oxford sounded like a very good idea. I still think it is, and honestly don't think that it's unreasonable as a potential investment into priorities research. Of course, given the current post-FTX situation, it would obviously not have been considered if the project was being proposed today.

Can you say who funded the dedicated grant?

I feel like this is pretty important. I think this is basically fine if it's a billionaire who thinks CEA needs real estate, and less fine if it is incestuous funding from another EA group.

I think the key question is whether the money would counterfactually have been available for another purpose. OCB half-implies it wouldn't have been by saying that buying the property fulfilled an obligation to the donor, but then I'm confused by the claim "we retain the underlying asset". If EVF holds the asset subject to an obligation only to use it as a specialist conference centre, it's unable to realise the value. On the other hand, it would seem surprising if the donation was made on the condition that it must be used to buy the building, but then EVF could do whatever it liked with it (including immediately reselling it).

If EVF is in fact able to resell the building now, then the argument that it was an ear-marked donation is weak, because EVF is making a decision now to hold the asset rather than sell it to raise funds for other EA causes.

It was OpenPhil, see here.

Owen - this sounds totally reasonable to me.

Max Planck Institutes had a dedicated conference center in the Alps (Schloss Ringberg) that is hugely inspirational, and that promotes intensive collaboration, brain-storming, and discussion very effectively.

Likewise for the Center for Advanced Study in the Behavioral Sciences at Stanford -- the panoramic views over the San Francisco Bay, the ease of both formal and informal interaction, and the optimal degree of proximity to the main campus (near, but not too near), promote very good high-level thinking and exchange of ideas.

I've been to about 70 conferences in my academic career, and I've noticed that the aesthetics, antiquity, and uniqueness of the venue can have a significant effect on the seriousness with which people take ideas and conversations, and the creativity of their thinking. And, of course, it's much easier to attract great talent and busy thinkers to attractive venues. Moreover, I suspect that meeting in a building constructed in 1480 might help promote long-termism and multi-century thinking.

It's hard to quantify the effects that an uplifting, distinctive, and beautiful venue can have on the quality and depth of intellectual and moral collaboration. But I think it's a real effect. And Wytham Abbey seems, IMHO, to be an excellent choice to capitalize on that effect.

The problem to me seems to be that "being hard to quantify" in this case very easily enables rationalizing spending money on fancy venues. I'm also not convinced that non-EA institutions spending money on fancy venues is a good argument for also doing so or an argument that fancy venues enable better research. These institutions probably just use fancy venues because it is self serving. As they don't usually promote doing the most good by being effective, I guess that nobody cares much that they do that.

Personally, I think that a certain level of comfort is helpful, e.g. having single / double rooms for everybody so they can sleep well, or not needing to cook etc. However, I'm very skeptical of anything above that being worth the money.

I don't want to be adversarial, but I just have to note how much your comment reads to me and other people I spoke to like motivated reasoning. I think it's very problematic if EA advocates for cost effectiveness on the one hand and then lightly spends a lot of money on fancy stuff which seems self serving.

Agreed. The whole founding insight of the EA movement was the importance of rigorously measuring value for money. The same logic is used to justify every warm and fuzzy but low value charity. And it's entirely reasonable to be very worried when major figures in the EA movement revert to that kind of reasoning when it's in their self interest.

First of all - I'm really glad you wrote this comment. This is exactly the kind of transparency I want to see from EA orgs.

On the other hand, I want to push back against your now last paragraph (on why you didn't write about this before). I strongly think that it's wrong to wait for criticism before you explain big and important decisions (like spending 15 million pounds on a castle). The fact that criticism arose here is basically random, and is a result of outside critics looking in. In a better state of affairs, you want the EA community to know about the things they need to look at and maybe criticise. Otherwise there's a big chance they'll miss things.

In other words, I think it's very important that major EA orgs proactively share the information and reasoning about big decisions.

This sort of comment sounds good in the abstract, but what specific process would you propose that you think would actually achieve this? CEA has to post all project proposals over a certain amount to the EA forum? Are people actually going to read them? What if they only appeal to specific funders? How much of a tax on the time of CEA staff are we willing to pay in order to get this additional transparency?

Personally, I think something like a quarterly report on incoming funds and outgoing expenses, ongoing projects and cost breakdowns, and expected and achieved outcomes would work very well. This is something I'd expect of any charity or NGO that values effectiveness and empirical backing, and particularly from one that places it at the center of its mission statement, so I struggle to think of it as a "tax" on the time of CEA workers rather than something that should be an accepted and factored-in cost of doing business.

The grandparent comment asks for decisions to be explained before criticism appears. Your proposal (which I do think is fairly reasonable) would not have helped in this case: Wytham Abbey would have got a nice explanation in the next quarterly report after it got done, i.e. far too late.

You would instead require ongoing, very proactive transparency on a per-decision basis in order to really pre-empt criticism.

I put a negative framing on it and you put a positive one, but it's a cost that prevents staff from doing other things with their time and so should be prioritised and not just put onto an unbounded queue of "stuff that should be done".

I think my broader frame around the issue affected how I read the parent comment. I took the problem to be a general issue of EA transparency. My thinking on a lot of the criticisms from within EA was that the general lack of transparency is the larger problem: if EAs knew there would be a report or justification coming, it would not have been such an issue within the community. I do see your point now, although I do think there are some pretty easy ways around it, like determining a reasonably high bar on the basis of CEA's general spending-per-line-item that would necessitate a kind of "this is a big deal" announcement.

Yes, that sounds about it. Although I would add decisions that are not very expensive but are very influential.

What do you mean?

A significant amount. This is well worth it. Although in practice I don't imagine there are that many decisions of this calibre. I would guess about 2-10 per year?

That's pretty unclear to me. We are in the position of maximum hindsight bias. An unusual and bad event has happened, that's the classic point at which people overreact about precautions.

I've been writing the same calls for transparency for months. This has nothing to do with FTX.

I'm not sure about this particular case, but I don't think the value of the property increasing over time is a generally good argument for why investments need not be publicly discussed. A lot of potential altruistic spending has benefits that accrue over time, where the benefits of money spent earlier outweighs the benefits of money spent later - as has been discussed extensively when comparing giving now vs. giving later.

The whole premise of EA is that resources should be spent in effective ways, and potential altruistic benefits is no excuse for an ineffective spending of money.

E.g., if EAs overpaid 30M£ for a property that resells at 15M£? I'd be a bit surprised they couldn't get a better deal, but I wouldn't feel concerned without knowing more details.

Seems to me that EA tends to underspend on this category of thing far more than they overspend, so I'd expect much more directional bias toward risk aversion than risk-seeking, toward naive virtue signaling over wealth signaling, toward Charity-Navigator-ish overhead-minimizing over inflated salaries, etc. And I naively expect EVF to err in this direction more than a lot of EAs, to over-scrutinize this kind of decision, etc. I would need more information than just "they cared enough about a single property with unusual features to overpay by 15M£" to update much from that prior.

We also have far more money right now than we know how to efficiently spend on lowering the probability that the world is destroyed. We shouldn't waste that money in large quantities, since efficient ways to use it may open up in the future; but I'd again expect EA to be drastically under-spending on weird-looking ways to use money to un-bottleneck us, as opposed to EA b...

Since EA isn't optimizing the goal "flip houses to make a profit", I expect us to often be willing to pay more for properties than we'd expect to sell them for. Paying 2x is surprising, but it doesn't shock me if that sort of thing is worth it for some reason I'm not currently tracking.

MIRI recently spent a year scouring tens of thousands of properties in the US, trying to find a single one that met conditions like "has enough room to fit a few dozen people", "it's legal to modify the buildings or construct a new one on the land if we want to", and "near but not within an urban center". We ultimately failed to find a single property that we were happy with, and gave up.

Things might be easier outside the US, but the whole experience updated me a lot about how hard it is to find properties that are both big and flexible / likely to satisfy more than 2-3 criteria at once.

At a high level, seems to me like EA has spent a lot more than 15M£ on bets that are vastly more uncertain and dependent-on-contested-models than "will we want space to house researchers or host meetings?". Whether discussion and colocation is useful is one of the only things I expect EAs to not disagree about; most other categories of activity depend on much more complicated stories, and are heavily about placing bets on more specific models of how the future is likely to go, what object-level actions to prioritize over other actions, etc.

Can we all just agree that if you’re gonna make some funding decision with horrendous optics, you should be expected to justify the decision with actual numbers and plans?

Would be nice if we actually knew how many conferences/retreats were going to be held at the EA castle.

It might be justifiable (I got a tremendous amount of value being in Berkeley and London offices for 2-month stints), but now we’re here talking about it, and it obviously looks bad to anyone skeptical about EA. Some will take it badly regardless, but come on. Even if other movements/institutions way overspend on bad stuff, let’s not use that as an excuse in EA.

The “EA will justify any purchase for the good of humanity” argument will just continue to pop up. I know many EAs who are aware of this and constantly concerned about overspending and rationalizing a purchase. As much as critics act like this is never a consideration and EAs are just naively self-rationalizing any purchase, it’s certainly not the case for most EAs I’ve met. It’s just that an EA castle with very little communication is easy ammo for critics when it comes to rationalizing purchases.

One failed/bad project is mostly bad for the people involved, but reputational risk is bad for the entire movement. We should not take this lightly.

Thanks for sharing your detailed thought process Owen, and I definitely appreciate the penultimate paragraph.

Sounds like a reasonable decision to me, but I do wonder why the reasoning behind such large and not immediately obvious decisions isn't communicated publicly more often.

Totally agree, as long as you give people the opportunity to figure out why you think it's good.

Anyway, thanks for clarifying!

In general I would agree that it's better to do what is good rather than what looks good. However, when you are the face of a global movement, optics have a meaningful financial implication. Imagine if this bad press made 1 billionaire 0.1% less likely to get involved with EA. That calculation would dominate any potential efficiency savings from insourcing a service provider.
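A rough sketch of the expected-value arithmetic behind this claim. The 0.1% figure comes from the comment; the donation sizes are purely hypothetical inputs chosen for illustration, since the comment does not say how much such a donor would give.

```python
def expected_donation_loss(probability_drop: float, donation_gbp: float) -> float:
    """Expected funding lost if a donor becomes slightly less likely to give.

    probability_drop: reduction in the chance of the donor getting involved
                      (0.001 corresponds to the comment's 0.1%).
    donation_gbp:     hypothetical lifetime donation if they did get involved.
    """
    if not 0.0 <= probability_drop <= 1.0:
        raise ValueError("probability_drop must be between 0 and 1")
    return probability_drop * donation_gbp


PROBABILITY_DROP = 0.001  # the 0.1% from the comment

# Hypothetical donation sizes; none of these appear in the thread.
for donation_gbp in (100_000_000, 1_000_000_000, 10_000_000_000):
    loss = expected_donation_loss(PROBABILITY_DROP, donation_gbp)
    print(f"donation £{donation_gbp:,}: expected loss about £{loss:,.0f}")
```

Whether this term dominates the efficiency savings depends heavily on the assumed donation size and on how many potential donors are affected, which is why the inputs are left as parameters rather than fixed.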

I used to think this and I increasingly don't. Doing good things is what we're all about. Doing good things even if it looks bad in the tabloid press is good publicity to the people who actually care about doing good, and they're more important to us than the rest.

I think an EA that was weirder and more unapologetic about doing its stuff would attract more of the right kind of people and could generally get on with things more than an EA that frantically tries to massage its optics to appeal to everyone.

I guess I've gone off into the abstract argument about whether we should care about optics or not. I don't mean to assert that buying Wytham Abbey was a good thing to do, I just think that we should argue about whether it was a good thing to do, not whether it looks like a good thing to do.

I think this kind of reasoning is difficult to follow in practice, and likely to do more harm than good. Eg, I expect some billionaires are drawn to a movement that says fuck PR and actually tries to do what's important - what if trying to account for PR has a 0.1% chance of putting off those billionaires? Etc.

At the very least, "do what is actually good rather than just what looks good" seems like a valid philosophy to follow if trying to do good, even after accounting for optics - trying to account for optics can easily be misleading, paralysing, etc.

This is fair, and I don't want to argue that optics don't matter at all or that we shouldn't try to think about them.

My argument is more that actually properly accounting for optics in your EV calculations is really hard, and that most naive attempts to do so can easily do more harm than good. And that I think people can easily underestimate the costs of caring less about truth or effectiveness or integrity, and overestimate the costs of being legibly popular or safe from criticism. Generally, people have a strong desire to be popular and to fit in, and I think this can significantly bias thinking around optics! I particularly think this is the case with naive expected value calculations of the form "if there's even a 0.1% chance of bad outcome X we should not do this, because X would be super bad". Because it's easy to anchor on some particularly salient example of X, and miss out on a bunch of other tail risk considerations.

The "annoying people by showing that we care more about style than substance" was an example of a countervailing consideration that argues in the opposite direction and could also be super bad.

This argument is motivated by the same reasoning as the "don't kill people to steal their organs, even if it seems like a really good idea at the time, and you're confident no one will ever find out" argument.

A large portion of your rationale is based on the intellectually stimulating effects of being surrounded by nice things. Do you think the people in the building will feel great when there's such negative media coverage, and they feel the guilt of such an opulent purchase? If I were invited to this place, I'd feel uncomfortable and guilty all the time. There's already a bunch of negative media coverage. It's not going to stop. And it's not going to make the program participants feel inspired.

While I understand this sentiment, optics can sometimes matter much more than you may at first expect. In this specific case, the kneejerk response of many people on social media to this seeming incongruity (a seemingly extravagant purchase by a main EA org) can potentially cement negative sentiment. By itself, maybe it's not that bad. But in combination with the other previous bad press we have from the FTX debacle, people will get it in their heads that "EA = BAD". I'm literally seeing major philosophers who might otherwise be receptive to EA being completely turned off because of tweets about Wytham Abbey.

This isn't to say that the purchase shouldn't have been made. But you specifically said that you think the general rule should be that we make decisions about what we think is good rather than by what looks good. While technically I agree with this, I think that blindly following such a rule puts us in a state of mind where we are at risk of underestimating just how bad optics can become.

I can see this point, but I'm curious - how would you feel about the reverse? Let's say that CEA chose not to buy it, and instead did conferences the normal way. A few months later, you're talking to someone from CEA, and they say something like:

Yeah, we were thinking of buying a nice place for these retreats, which would have been cheaper in the long run, but we realised that would probably make us look bad. So we decided to eat the extra cost and use conference halls instead, in order to help EA's reputation.

Would you be at all concerned by this statement, or would that be a totally reasonable tradeoff to make?

+1 to Jay's point. I would probably just give up on working with EAs if this sort of reasoning were dominant to that degree? I don't think EA can have much positive effect on the world if we're obsessed with reputation-optimizing to that degree; it's the sort of thing that can sound reasonable to worry about on paper, but in practice tends to cause more harm than good to fixate on in a big way.

(More reputational harm than reputational benefit, of the sort that matters most for EA's ability to do the most good; and also more substantive harm than substantive benefit.

Being optics-obsessed is not a good look! I think this is the largest reputational problem EA currently faces: we promote too much of a culture of fearing and obsessing over optics and reputational risks.)

This comment suggests that renting conference venues in Oxford can be pretty expensive:

https://forum.effectivealtruism.org/posts/xof7iFB3uh8Kc53bG/why-did-cea-buy-wytham-abbey?commentId=3yeffQWcRFvmeteqc

Your cost estimates seem to be in the wrong order of magnitude.

FWIW, as someone who also works in communications, I strongly disagree here and think EA spends massively too much of its mental energy thinking about optics.

More specifically:

I tend to criticize virtue ethics and deontology a lot more than I praise them -- IMO these are approaches that often go badly wrong. But I think PR (for a community like EA) is an area where deontology-like adherence to "behave honestly and with integrity" and virtue-ethics-like focus on "be the sort of person internally who you would find most admirable and virtuous" tends to have far better consequences than "select the action that naively looks as though it will make others like you the most".

If you're an EA and you want to improve EA's reputation, my main advice to you is going to look very virtue-ethics-flavored: be brave, be thoughtful, be discerning, be honest, be honorable, be fair, be compassionate, be trustworthy; and insofar as you're not those things, be honest about it (because honesty is on the list, and is paramount to trusting everything else about your apparent virtues); and let your reputation organically follow from the visible signs of those internal traits of yours, rather than being a t...

My take is about 90% in agreement with this.

The other 10% is something like: "But sometimes adding time and care to how, when, and whether you say something can be a big deal. It could have real effects on the first impressions you, and the ideas and communities and memes you care about, make on people who (a) could have a lot to contribute on goals you care about; (b) are the sort of folks for whom first impressions matter."

10% is maybe an average. I think it should be lower (5%?) for an early-career person who's prioritizing exploration, experimentation and learning. I think it should be higher (20%?) for someone who's in a high-stakes position, has a lot of people scrutinizing what they say, and would lose the opportunity to do a lot of valuable things if they substantially increased the time they spent clearing up misunderstandings.

I wish it could be 0% instead of 5-20%, and this emphatically includes what I wish for myself. I deeply wish I could constantly express myself in exploratory, incautious ways - including saying things colorfully and vividly, saying things I'm not even sure I believe, and generally 'trying on' all kinds of ideas and messages. This is my natural way of being; but I feel like I’ve got pretty unambiguous reasons to think it’s a bad idea.

If you want to defend 0%, can you give me something here beyond your intuition? The stakes are high (and I think "Heuristics are almost never >90% right" is a pretty good prior).

Here's an explanation of some of the reasons it's often harmful for a community to fixate on optics, even though optics is real: https://www.lesswrong.com/posts/Js34Ez9nrDeJCTYQL/politics-is-way-too-meta

It also comes off as quite manipulative and dishonest, which puts people off. There are many people who'll respect you if you disagree with them but state your opinion plainly and clearly, without trying to hide the weird or objectionable parts of your view. There are relatively few who will respect you if they find out you tried to manipulate their opinion of you, prioritizing optics over substance.

And this seems especially harmful for EA, whose central selling point is "we're the people who try to actually do the most good, not just signal goodness or go through the motions". Most public conversations about EA optics are extremely naive on this point, treating it as a free action for EAs to spend half their time publicly hand-wringing about their reputations.

What sort of message do you think that sends to people who come to the EA Forum for the first time, interested in EA, and find the conversations dominated by reputation obsession, panicky glances at the news cycle, complicated strategies to toss first-order utility out the window for the sake of massaging outsiders' views of EA, etc.? Is that the best possible public face you could pick for EA?

The main problem with lavishness, IMHO, is not optics per se, but rather that it's extremely easy for people to trick themselves into believing that spending money on their own comfort/lifestyle/accommodations is net-good-despite-looking-bad (for productivity reasons or whatever). This generalizes to the community level.

(To be clear, this is not to say that we should never follow such reasoning. It's just a serious pitfall. This is also not original—others have certainly brought this up.)

Also, I imagine having communicated the reasoning behind the purchase publicly before the criticisms would have gone some way in reducing the bad optics, especially for onlookers who were inclined to spend a little bit of time to understand both perspectives. So thinking more about the optics doesn't necessarily lead you to not do the thing.

"I did feel a little nervous about the optical effects"

Was there no less-extravagant-looking conference space for sale?

This is not a comment on the cheapness point, but in case this feels relevant, private vehicles are not necessary to access this venue: from the Oxford rail station you can catch public buses that drop you off about a 2-minute walk from the venue. It's a 20-minute bus ride, and the buses don't come super often (every 60 minutes, I think?) but I just wanted to be clear that you can access this space via public transport.

Presumably it would be easy to arrange a conference minibus to shuttle attendees to and from the station. This seems like the least of the project's problems.

A typical researcher might make £100,000 a year. £15,000,000 is roughly £1,000,000 a year if invested in the stock market. So you could hire 5 researchers to work full-time, in perpetuity.

Conferences are cool, but do you really think they generate as much research as 5 full time researchers would? As a researcher, I can tell you flat-out the answer is no. I could do much more with 5 people working for me than I could by going to even a thousand conferences.
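The endowment arithmetic in this comment can be sketched as follows. The £15m, £1m a year, and £100,000 figures are the comment's own; the overhead multiplier is a hypothetical assumption introduced here only to show one way the comment's figure of 5 researchers could arise.

```python
ENDOWMENT_GBP = 15_000_000       # from the comment
ANNUAL_INCOME_GBP = 1_000_000    # comment's rough yearly investment income
RESEARCHER_SALARY_GBP = 100_000  # comment's typical salary

# Hypothetical: total cost per researcher as a multiple of salary
# (office, benefits, admin). Not stated anywhere in the thread.
OVERHEAD_MULTIPLIER = 2.0


def implied_annual_return(income_gbp: float, endowment_gbp: float) -> float:
    """Rate of return implied by the quoted income and endowment."""
    return income_gbp / endowment_gbp


def researchers_funded(income_gbp: float, cost_per_researcher_gbp: float) -> int:
    """Whole number of researchers the yearly income can pay for."""
    return int(income_gbp // cost_per_researcher_gbp)


rate = implied_annual_return(ANNUAL_INCOME_GBP, ENDOWMENT_GBP)
salary_only = researchers_funded(ANNUAL_INCOME_GBP, RESEARCHER_SALARY_GBP)
with_overhead = researchers_funded(
    ANNUAL_INCOME_GBP, RESEARCHER_SALARY_GBP * OVERHEAD_MULTIPLIER
)

print(f"implied annual return: {rate:.1%}")
print(f"researchers on salary alone: {salary_only}")
print(f"researchers with assumed overhead: {with_overhead}")
```

On salary alone, the quoted figures would fund 10 researchers rather than 5, and the implied return is about 6.7% a year. The comment's 5 is consistent with a fully loaded cost of roughly twice salary, but that reading is an assumption, not something the comment states.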

Thanks, this is indeed helpful. I would also like to know though, what made this property “the most appropriate” out of the three in a bit greater detail if possible. How did its cost compare to the others? Its amenities? I think many people in this thread agree that it might have been worth it to buy some center like this, but still question whether this particular property was the most cost effective one.

Thanks, I appreciate the added information! I'm not sure I'm convinced that this was worthwhile, but I feel like I now have a much better understanding of the case for it.

Thanks for explaining!

I like this point.

I think this was a terrible idea

I think you've overestimated the value of a dedicated conference centre. The important ideas in EA so far haven't come from conversations over tea and scones at conference centres but are either common sense ("do the most good", "the future matters") or have come from dedicated field trials and RCTs.

I also think you've underestimated the damage this will do to the EA brand. The hummus and baguettes signal an earnestness. Abbey signals scam.

I'm confident that this will be remembered as one of CEA's worst decisions.

How much are electricity, maintenance and property tax for this venue? Historic buildings may require expensive restoration and are subject to complex regulation.

I think it’s plausible that this purchase saves money, but I strongly disagree with your view of optics.

“think it’s better to let decisions be guided less by what we think looks good, and more by what we think is good”

What looks good has important effects on EA community building, the diffusion of EA ideas and on the ability to promote EA ideas in politics, especially over the longer term.

Whether a decision looks good, i.e., the indirect, long-term effects of the decision on EA’s reputation, is a very important factor in determining whether a decision is...

I’m not qualified to comment on the calculations but did you hire a real estate consultant and venue manager to advise?